EJFAT UDP Transmission Performance

Latest revision as of 21:01, 7 September 2022

UDP Performance Overview

This page is dedicated to researching methods to maximize reliable UDP transmission rates between nodes.

Transmission between indra-s1 and indra-s2

The following tests were run with 1 sender and 1 receiver. The sender was the packetBlaster program whose help gives the following output:

usage: ./packetBlaster

[-h] [-v] [-ip6] [-sendnocp]

[-bufdelay] (delay between each buffer, not packet)

[-host <destination host (defaults to 127.0.0.1)>]

[-p <destination UDP port>]

[-i <outgoing interface name (e.g. eth0, currently only used to find MTU)>]

[-mtu <desired MTU size>]

[-t <tick>]

[-ver <version>]

[-id <data id>]

[-pro <protocol>]

[-e <entropy>]

[-b <buffer size>]

[-s <UDP send buffer size>]

[-cores <comma-separated list of cores to run on>]

[-tpre <tick prescale (1,2, ... tick increment each buffer sent)>]

[-dpre <delay prescale (1,2, ... if -d defined, 1 delay for every prescale pkts/bufs)>]

[-d <delay in microsec between packets>]

EJFAT UDP packet sender that will packetize and send buffer repeatedly and get stats

By default, data is copied into buffer and "send()" is used (connect is called).

Using -sendnocp flag, data is sent using "send()" (connect called) and data copy minimized, but original data buffer changed

The blaster sent 89kB buffers, each split into 10 packets, from Indra-s1 to the load balancer (129.57.109.254, port 19522) with mtu = 9000.

The sending thread was NOT tied to any specific core, and the entropy and data id were both 0:

./packetBlaster -host 129.57.109.254 -p 19522 -mtu 9000 -ver 2 -sendnocp -t 0 -id 0 -e 0 -b 89000
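The figure of 10 packets per 89kB buffer follows from the MTU. The arithmetic can be sketched as below. This is only an illustration: `packets_per_buffer` is a hypothetical helper, and the 28-byte overhead assumes plain IPv4 + UDP headers. Any EJFAT-specific headers carried in each packet are not counted here and would reduce the payload slightly.

```python
import math

def packets_per_buffer(buffer_bytes, mtu, header_bytes=28):
    """Number of UDP packets needed to carry one buffer.

    header_bytes is the per-packet overhead inside the MTU.
    28 = 20 (IPv4 header) + 8 (UDP header); this is an assumption,
    protocol-specific headers would add to it.
    """
    payload = mtu - header_bytes
    if payload <= 0:
        raise ValueError("MTU too small for the given header size")
    return math.ceil(buffer_bytes / payload)

# Values taken from the test above: 89000-byte buffer, MTU of 9000
print(packets_per_buffer(89000, 9000))  # prints 10
```

With the same MTU, the result stays at 10 as long as the total per-packet overhead is 100 bytes or less.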

The receiver was the packetBlastee program whose help gives the following output:

usage: ./packetBlastee

[-h] [-v] [-ip6]

[-a <listening IP address (defaults to INADDR_ANY)>]

[-p <listening UDP port>]

[-b <internal buffer byte sizez>]

[-r <UDP receive buffer byte size>]

[-f <file for stats>]

[-cores <comma-separated list of cores to run on>]

[-tpre <tick prescale (1,2, ... expected tick increment for each buffer)>]

This is an EJFAT UDP packet receiver made to work with packetBlaster.

The blastee was receiving on Indra-s2. Initially the receiving thread was NOT tied to any specific core.

This program tracks the number of dropped packets. For this statistic to be accurate,

the value given to the -tpre command line option must be identical for both sender and receiver. This

ensures that the receiver knows which tick is coming next.

./packetBlastee -p 17750
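Why the prescale has to match on both ends can be seen in a small sketch. This is not the packetBlastee code: `count_missing_buffers` is a hypothetical function that assumes the receiver detects loss purely from gaps in the tick sequence, and it counts whole buffers rather than individual packets.

```python
def count_missing_buffers(ticks, tick_prescale=1):
    """Count buffers that never arrived, judged by gaps in the tick sequence.

    ticks: tick values in the order they were received.
    tick_prescale: expected tick increment from one buffer to the next.
    """
    missing = 0
    expected = None
    for tick in ticks:
        if expected is not None and tick > expected:
            # Each skipped step of size tick_prescale is one lost buffer
            missing += (tick - expected) // tick_prescale
        expected = tick + tick_prescale
    return missing

# Sender used a prescale of 2 and nothing was lost
received = [0, 2, 4]
print(count_missing_buffers(received, tick_prescale=2))  # prints 0
print(count_missing_buffers(received, tick_prescale=1))  # prints 2
```

In the second call the receiver assumes a prescale of 1 and reports two lost buffers that were never sent, which is the kind of false count that a mismatched prescale produces.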

Speed tests were done with packetBlaster and packetBlastee, giving the following results:

The highest observed average data rate was 4.0GB/sec.

Terminals

With multiple terminals open on s1 and s2, and only 1 sender and 1 receiver running at a time, some terminal pairs produce the highest transfer rate and some do not.

How the operating system determines this is a mystery at this point. Sometimes, to get the highest rate, a sending/receiving pair needs to be run,

killed, and restarted a number of times. Again, why this is so is a mystery. If a sender/receiver pair is not running at 4GB/s, then it generally runs at 3.3GB/s.

Sending Thread

The operating system always did the best job of selecting where the packetBlaster was run.

If a sending thread was tied to 1 or more cores, then performance diminished noticeably. The top transfer rate became 3.5GB/s.

Receiving Thread

Generally, running at the top transfer rate meant dropping packets on occasion. However,

dropped packets could be eliminated by tying the reassembly thread of a receiver to a single core.

This did not improve top speed. Note that tying it to multiple cores always diminished performance considerably.

In the case of tying to 2 cores, the received rate dropped to almost 1/2 the sending rate. The advantage of tying reassembly to a single core comes with an important caveat:

it depends on the ksoftirqd thread.
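For reference, tying a thread to a single core on Linux comes down to setting its CPU affinity. The sketch below shows the operating system call through Python's `os` module. It is not how packetBlastee implements its -cores option, and `pin_current_thread` is a hypothetical helper name. The calls are Linux-only.

```python
import os

def pin_current_thread(core):
    """Restrict the calling thread to one core and return the resulting affinity set.

    Linux only: os.sched_setaffinity is not available on every platform.
    """
    os.sched_setaffinity(0, {core})  # pid 0 means "the caller"
    return os.sched_getaffinity(0)

if __name__ == "__main__":
    allowed = sorted(os.sched_getaffinity(0))
    print("allowed cores before pinning:", allowed)
    print("affinity after pinning:", pin_current_thread(allowed[0]))
    os.sched_setaffinity(0, allowed)  # restore the original affinity
```

Passing a set with more than one core corresponds to the multi-core case that was observed to diminish performance, since the scheduler is then free to move the thread between those cores.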

ksoftirqd Thread(s)

ksoftirqds are kernel threads driving soft IRQs, things like NET_TX_SOFTIRQ and NET_RX_SOFTIRQ.

These are implemented separately from the actual interrupt handlers in the device drivers, where latency is critical.

An actual interrupt handler, or hardware IRQ, for a network card is concerned with getting data to/from the device as quickly as possible.

It doesn't know anything about TCP/IP processing. It knows about its bus handling (say PCI), its card specifics (ring buffers, control/config registers),

DMA, and a bit about Ethernet. It passes packets (sk_buffs to be exact) to, and receives them from, the ksoftirqd threads through queues.

By splitting the workload, the kernel doesn't try to do everything in the actual hardware IRQ while other IRQs are potentially masked.

If the Linux kernel is > 2.4.2, a NAPI ("New API") compliant network driver should be available, and one of the things such a driver can support is interrupt mitigation.

If the packet rate becomes too high, a NAPI driver can turn off interrupts (which are fairly expensive - one per packet) and go into a polling mode.

However, the driver needs to support this.

Questions: How do we know if s2's network driver is NAPI enabled?

If it isn't: Can we get a NIC for s2 that is NAPI enabled?

If not tied to a core, the reassembler will drop packets if the ksoftirqd thread consumes 5% or more of a CPU (according to "top").

If tied to a single core, no packets are dropped even if the ksoftirqd thread goes up to 7% CPU with the receiver at around 88%.

If the kernel thread's usage jumps to 10% or higher (even to 100%), it kills performance and nothing good happens.

Why this thread sometimes takes up so much CPU while at other times it doesn't is still a great mystery, as is why it can take several tries to get things started correctly.
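One way to see which cores are doing the receive-side soft IRQ work, as a complement to watching "top", is to compare two snapshots of /proc/softirqs. The sketch below is only an illustration: the function names are hypothetical, and it assumes the usual layout of that file (a header row of CPU names followed by one row of per-CPU counters per soft IRQ type).

```python
def parse_softirqs(text):
    """Parse /proc/softirqs text into {softirq_name: [count_cpu0, count_cpu1, ...]}."""
    lines = text.strip().splitlines()
    counts = {}
    for line in lines[1:]:  # the first line is the CPU header row
        name, _, rest = line.partition(":")
        counts[name.strip()] = [int(value) for value in rest.split()]
    return counts

def busiest_cpus(before, after, name="NET_RX", top=3):
    """Return up to `top` (cpu, delta) pairs with the largest counter increase."""
    deltas = [b - a for a, b in zip(before[name], after[name])]
    ranked = sorted(range(len(deltas)), key=lambda cpu: deltas[cpu], reverse=True)
    return [(cpu, deltas[cpu]) for cpu in ranked[:top] if deltas[cpu] > 0]

def read_softirqs(path="/proc/softirqs"):
    """Take one snapshot of the live counters (Linux only)."""
    with open(path) as f:
        return parse_softirqs(f.read())
```

Calling `read_softirqs()` twice, a second or so apart while a transfer is running, and passing both results to `busiest_cpus` lists the cores where NET_RX processing is concentrated. That can then be compared against the core the reassembly thread is tied to.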

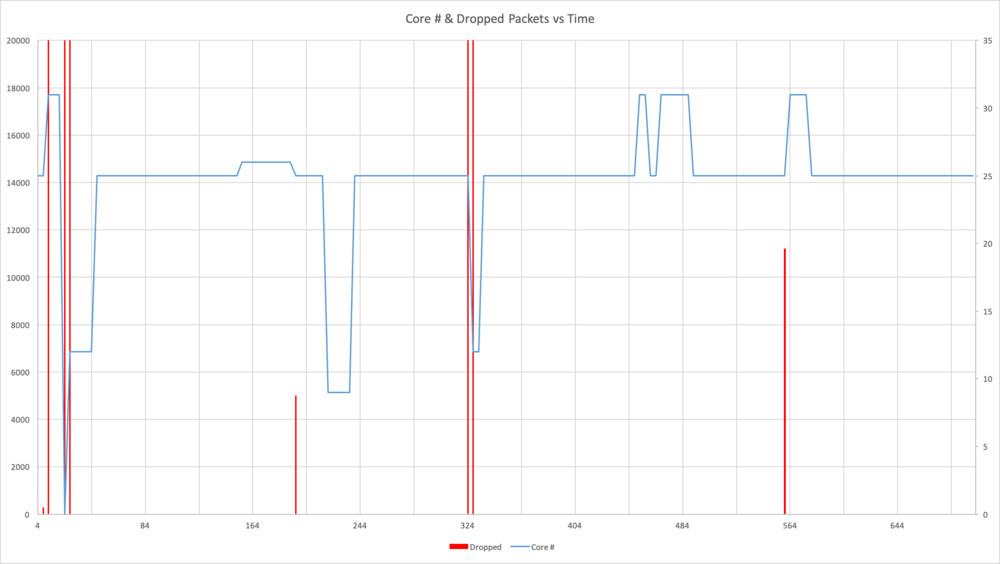

Switching core of reassembly thread correlates to lost packets

In the graph, dropped packets (red) occur when the reassembly thread is switched to a different core (blue).

Packets are not always lost in a switch, but whenever packets were lost, there was a switch.

The right vertical scale is the core number, the left is the number of dropped packets. The horizontal scale is seconds.
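The core a thread last ran on can be sampled from /proc, which is one way data for a plot like this could be collected. The sketch below is an assumption about method, not a description of how the graph was actually produced. The helper names are hypothetical. It relies on field 39 ("processor") of /proc/[pid]/stat, as documented in proc(5).

```python
def last_cpu_from_stat(stat_line):
    """Extract the 'processor' field (field 39) from a /proc/.../stat line."""
    # Field 2 (comm) is in parentheses and may itself contain spaces or ')',
    # so split after the last ')' before counting fields.
    rest = stat_line.rsplit(")", 1)[1].split()
    # rest[0] is field 3 (state), so field 39 is rest[36]
    return int(rest[36])

def thread_cpu(pid, tid):
    """Core on which thread `tid` of process `pid` last ran (Linux only)."""
    with open(f"/proc/{pid}/task/{tid}/stat") as f:
        return last_cpu_from_stat(f.read())

def core_switch_times(samples):
    """Given (time, core) samples in time order, return the times at which the core changed."""
    switches = []
    for (_, prev_core), (t, core) in zip(samples, samples[1:]):
        if core != prev_core:
            switches.append(t)
    return switches
```

Sampling `thread_cpu` once per second alongside the receiver's dropped-packet count gives the two series needed to check whether each loss coincides with a switch.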

Transmission between ejfat-2 and U280 on ejfat-1 (Sep 2022)

We can find the NUMA node number of ejfat-2's NIC by looking at the output of:

cat /sys/class/net/enp193s0f1np1/device/numa_node

Which is:

10

To find out more about the cores and NUMA node numbers of ejfat-2, look at the output of:

numactl --hardware

Which is:

available: 16 nodes (0-15)
node 0 cpus: 0 1 2 3 4 5 6 7
node 0 size: 32068 MB
node 0 free: 31458 MB
node 1 cpus: 8 9 10 11 12 13 14 15
node 1 size: 32250 MB
node 1 free: 31564 MB
node 2 cpus: 16 17 18 19 20 21 22 23
node 2 size: 32252 MB
node 2 free: 31897 MB
node 3 cpus: 24 25 26 27 28 29 30 31
node 3 size: 32251 MB
node 3 free: 31748 MB
node 4 cpus: 32 33 34 35 36 37 38 39
node 4 size: 32252 MB
node 4 free: 31948 MB
node 5 cpus: 40 41 42 43 44 45 46 47
node 5 size: 32251 MB
node 5 free: 31923 MB
node 6 cpus: 48 49 50 51 52 53 54 55
node 6 size: 32252 MB
node 6 free: 31484 MB
node 7 cpus: 56 57 58 59 60 61 62 63
node 7 size: 32239 MB
node 7 free: 31734 MB
node 8 cpus: 64 65 66 67 68 69 70 71
node 8 size: 32252 MB
node 8 free: 31949 MB
node 9 cpus: 72 73 74 75 76 77 78 79
node 9 size: 32215 MB
node 9 free: 31886 MB
node 10 cpus: 80 81 82 83 84 85 86 87
node 10 size: 32252 MB
node 10 free: 30250 MB
node 11 cpus: 88 89 90 91 92 93 94 95
node 11 size: 32251 MB
node 11 free: 31792 MB
node 12 cpus: 96 97 98 99 100 101 102 103
node 12 size: 32252 MB
node 12 free: 31752 MB
node 13 cpus: 104 105 106 107 108 109 110 111
node 13 size: 32251 MB
node 13 free: 31541 MB
node 14 cpus: 112 113 114 115 116 117 118 119
node 14 size: 32252 MB
node 14 free: 31567 MB
node 15 cpus: 120 121 122 123 124 125 126 127
node 15 size: 32241 MB
node 15 free: 31568 MB
node distances:
node   0   1   2   3   4   5   6   7   8   9  10  11  12  13  14  15
  0:  10  11  12  12  12  12  12  12  32  32  32  32  32  32  32  32
  1:  11  10  12  12  12  12  12  12  32  32  32  32  32  32  32  32
  2:  12  12  10  11  12  12  12  12  32  32  32  32  32  32  32  32
  3:  12  12  11  10  12  12  12  12  32  32  32  32  32  32  32  32
  4:  12  12  12  12  10  11  12  12  32  32  32  32  32  32  32  32
  5:  12  12  12  12  11  10  12  12  32  32  32  32  32  32  32  32
  6:  12  12  12  12  12  12  10  11  32  32  32  32  32  32  32  32
  7:  12  12  12  12  12  12  11  10  32  32  32  32  32  32  32  32
  8:  32  32  32  32  32  32  32  32  10  11  12  12  12  12  12  12
  9:  32  32  32  32  32  32  32  32  11  10  12  12  12  12  12  12
 10:  32  32  32  32  32  32  32  32  12  12  10  11  12  12  12  12
 11:  32  32  32  32  32  32  32  32  12  12  11  10  12  12  12  12
 12:  32  32  32  32  32  32  32  32  12  12  12  12  10  11  12  12
 13:  32  32  32  32  32  32  32  32  12  12  12  12  11  10  12  12
 14:  32  32  32  32  32  32  32  32  12  12  12  12  12  12  10  11
 15:  32  32  32  32  32  32  32  32  12  12  12  12  12  12  11  10

From this info we see that sending data over the NIC should be fastest on node #10 - the same one servicing the NIC.

This means that the best performing cores should be:

80 81 82 83 84 85 86 87

The next level down in performance should be node 11, or cores:

88 89 90 91 92 93 94 95

The 3rd level down in performance should be nodes 8, 9, 12, 13, 14, 15, or cores:

64-79, 96-127

The 4th level down in performance should be nodes 0-7, or cores:

0-63
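The four performance levels above can be derived mechanically from the NIC node's row of the distance table. The sketch below does this for node 10 using the distances printed by numactl. The function names are hypothetical, and the 8 cores per node are taken from the output above rather than discovered at run time.

```python
from collections import defaultdict

# Row "10:" of the node distance table above (distance from node 10 to nodes 0..15)
NIC_NODE_DISTANCES = [32, 32, 32, 32, 32, 32, 32, 32, 12, 12, 10, 11, 12, 12, 12, 12]

def rank_nodes_by_distance(distances_from_nic):
    """Group NUMA nodes by distance from the NIC's node, nearest group first."""
    tiers = defaultdict(list)
    for node, distance in enumerate(distances_from_nic):
        tiers[distance].append(node)
    return [(distance, tiers[distance]) for distance in sorted(tiers)]

def cores_of(node, cores_per_node=8):
    """Core numbers belonging to a node, assuming consecutive numbering as on ejfat-2."""
    first = node * cores_per_node
    return range(first, first + cores_per_node)

for level, (distance, nodes) in enumerate(rank_nodes_by_distance(NIC_NODE_DISTANCES), start=1):
    cores = [core for node in nodes for core in cores_of(node)]
    print(f"level {level}: distance {distance}, nodes {nodes}, cores {cores[0]}-{cores[-1]}")
```

For the third level the printed range 64-127 spans nodes 10 and 11 as well, so read it together with the node list: the cores in that level are 64-79 and 96-127.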