Difference between revisions of "EJFAT Technical Design Overview"

| (31 intermediate revisions by the same user not shown) | |||

| Line 1: | Line 1: | ||

= Abstract = | = Abstract = | ||

| − | We describe | + | We describe an engineering collaboration between Energy Sciences Network (ESnet) and Thomas Jefferson National Laboratory (JLab) for proof of concept engineering for an ''accelerated'' load balancer (LB) for servicing Data Acquisition/Production (DAQ) systems using dynamic IPv4/IPv6 address forwarding. ''Dynamic'' because the forwarding address is chosen dynamically based on near real-time destination workload conditions, and ''accelerated'' because the forwarding is accomplished with low ''fixed'' latency at line rates of up to 200 Gbps per Field Programmable Gate Array (FPGA), where in general a functioning LB may consist of up to four FPGAs acting as one logical ''Data Plane'' (DP) for a total bandwidth approaching 1 Tbps. |

| − | |||

= EJFAT Overview = | = EJFAT Overview = | ||

| − | This collaboration between ESnet and JLab for FPGA Accelerated Transport (EJFAT) seeks | + | This collaboration between ESnet and JLab for FPGA Accelerated Transport (EJFAT) seeks a capability to dynamically redirect UDP traffic based on endpoint feedback. Packets that share an aggregation tag go to a single, dynamically determined IP, and packets tagged with a subordinate substream within that aggregation tag go to individual ports of the selected IP. |

| − | EJFAT will add meta-data to UDP data streams to be used both by | + | EJFAT will add meta-data to UDP data packet streams to be used both by |

<ul> | <ul> | ||

| − | <li>the intervening FPGA, acting as | + | <li>the intervening FPGA based DP, acting as a work Load Balancer (LB) that redirects UDP/IP data packets from multiple input streams sharing a common aggregation tag to a dynamically selected single IP endpoint |

| − | <li>an endpoint Reassembly Engine (RE) to perform | + | <li>an endpoint Reassembly Engine (RE) to perform required reassembly at the endpoint of the individual input streams bearing a subordinate sub-stream id tag to facilitate synthesizing a ''data event''. |

</ul> | </ul> | ||

| − | The | + | The aggregation/reassembly meta-data included in the source data header in the payload is generic in design and can be utilized for scientific instrument originating streamed data or more conventional sources (e.g., stored/replayed data) to a back-end compute farm. |

| + | |||

| + | In triggered readout systems, the aggregation tag is normally associated with a physics or other ''phenomenological'' event, and is often a timestamp index. In streaming readout (SRO) systems, the aggregation tag is more arbitrary and will likely be a sequential time window index. In the SRO case, phenomenological events will likely span aggregation tags, but would be expected to fall in nearest neighbor tags. | ||

| − | + | Data producers send meta-data tagged UDP data to a well known DP IP/port and the LB Control Plane (CP), a software agent not necessarily co-located with the DP, is the LB interface for data consumers. Consumers use a publish-subscribe protocol to make an unsolicited announcement of their capacity for work to the CP with frequent updates, thus the back end processing resources are of arbitrary composition and free to span facilities and scale as desired/directed. | |

| − | + | The principal functions of the CP are to manage subscriptions/withdrawals of consuming resources and dynamically apportion data events to subscribed nodes according to their frequently changing relative capacity to receive new work. | |

| − | As the | + | The secure connection between the DAQ and LB can be integrated once, regardless of the final selection of computational facilities. As well, computational facilities do not get any work pushed into them, instead they dynamically register and |

| + | withdraw processing resources for service with the LB. This integration is also done once between the LB and the compute center, rather than once per experiment or DAQ facility. | ||

= Data Source Processing = | = Data Source Processing = | ||

| − | Any data source wishing to take advantage of the EJFAT Load Balancer e.g., the Read Out Controllers (ROC) of the JLab DAQ system, must be prepared to stream data via UDP | + | Any data source wishing to take advantage of the EJFAT Load Balancer e.g., the Read Out Controllers (ROC) of the JLab DAQ system, must be prepared to stream data via UDP by including additional meta-data prepended to the actual UDP payload. |

| + | |||

| + | Additionally, optionally randomizing the UDP header ''source port'' will induce LAG switch entropy at the front edge and optimize traffic flow through the network fabric to the destination. | ||

| + | |||

| + | This new meta-data, populated by the data source, consists of two parts; the first for the LB and the second for the RE: | ||

<ul> | <ul> | ||

| − | <li>the LB to route all UDP packets with a common ''' | + | <li>the LB to route all UDP packets with a common '''aggregation tag''' value to a single destination endpoint |

| − | <li>the destination | + | <li>the destination RE to reassemble packets with a common aggregation tag into proper sequence for each substream or ''channel'' within the overarching aggregation tag. |

</ul> | </ul> | ||

| − | + | The LB meta-data, processed by the LB, is to be in '''''network'' or ''big endian'' order'''. The rest of the data including the RE meta-data can be formatted at the discretion of the EJFAT application. | |

| − | |||

| − | The LB meta-data, processed by the LB, is to be in '''''network'' or ''big endian'' order'''. The rest of the data including the RE | ||

| − | |||

| − | |||

== Load Balancer Meta-Data == | == Load Balancer Meta-Data == | ||

| − | The LB meta-data | + | The LB meta-data is 128 bits that consists of two 64 bit words: |

<ul> | <ul> | ||

| − | <li>'''LB Control Word''' | + | <li>'''LB Control Word''' bits 0-63 such that |

<ul> | <ul> | ||

| − | <li>bits 0-7 ASCII character ’L’</li> | + | <li>bits 0-7 the 8 bit ASCII character ’L’ = 76 = 0x4C</li> |

| − | <li>bits 8-15 ASCII character ’B’</li> | + | <li>bits 8-15 the 8 bit ASCII character ’B’ = 66 = 0x42</li> |

| − | <li>bits 16-23 LB | + | <li>bits 16-23 the 8 bit LB ''version number'' starting at 1 (constant for run duration): '''Currently = 2'''.</li> |

| − | <li>bits 24-31 | + | <li>bits 24-31 the 8 bit ''Protocol Number'' (very useful for protocol decoders e.g., wireshark/tshark )</li> |

| − | <li>bits 32-47 | + | <li>bits 32-47 ''Reserved'' </li> |

| − | <li>bits 48-63 '' | + | <li>bits 48-63 an unsigned 16 bit ''Channel'' or substream (e.g., ROC id) value for destination port selection</li> |

| − | </ul | + | </ul> |

| − | <li>''' | + | <li>'''Aggregation Control Word''' bits 64-127 an unsigned 64 bit ''aggregation tag'' or ''tick'' that for the duration of an experiment data transfer session</li> |

<ul> | <ul> | ||

<li>Monotonically increases</li> | <li>Monotonically increases</li> | ||

| Line 54: | Line 56: | ||

<li>Never rolls over</li> | <li>Never rolls over</li> | ||

<li>Never resets</li> | <li>Never resets</li> | ||

| − | <li>Serves as | + | <li>Serves as the top level aggregation tag across packets.</li> |

| − | </ul | + | </ul> |

</ul> | </ul> | ||

| Line 63: | Line 65: | ||

0 1 2 3 4 5 6 7 8 9 0 1 2 3 4 5 6 7 8 9 0 1 2 3 4 5 6 7 8 9 0 1 | 0 1 2 3 4 5 6 7 8 9 0 1 2 3 4 5 6 7 8 9 0 1 2 3 4 5 6 7 8 9 0 1 | ||

+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+ | +-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+ | ||

| − | | L | + | | 'L' | 'B' | Version | Protocol | |

+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+ | +-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+ | ||

3 4 5 6 | 3 4 5 6 | ||

2 3 4 5 6 7 8 9 0 1 2 3 4 5 6 7 8 9 0 1 2 3 4 5 6 7 8 9 0 1 2 3 | 2 3 4 5 6 7 8 9 0 1 2 3 4 5 6 7 8 9 0 1 2 3 4 5 6 7 8 9 0 1 2 3 | ||

+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+ | +-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+ | ||

| − | | Rsvd | | + | | Rsvd | Channel | |

+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+ | +-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+ | ||

6 12 | 6 12 | ||

| Line 79: | Line 81: | ||

</pre> | </pre> | ||

| − | The value of the ''' | + | The value of the ''Aggregation Tag'', ''Event ID'', or simply ''Tick'' field is populated by the data source, and the '''LB data plane''' redirects all UDP packets with a shared value to a single destination host IP; e.g., for the JLab particle detector triggered DAQ system it would likely be a timestamp, otherwise it will be some kind of up-counting index. The '''Channel''' field (bits 48-63) indicates the logical channel within the data event (tick); channels within an event must be independently reassembled. |

== Reassembly Engine Meta-Data == | == Reassembly Engine Meta-Data == | ||

| − | The '''RE meta-data''' | + | The '''RE meta-data''' is 160 bits and consists of |

<ul> | <ul> | ||

| − | <li>bits 0-3 ''Version'' number</li> | + | <li>bits 0-3 the 4 bit ''Version'' number</li> |

| − | <li>bits 4- | + | <li>bits 4-15 a 12 bit Reserved field</li> |

| − | <li>bit | + | <li>bits 16-31 an unsigned 16 bit ''Data Id''</li> |

| − | <li>bit | + | <li>bits 32-63 an unsigned 32 bit packet buffer ''offset'' byte number from beginning of file (BOF) for reassembly</li> |

| − | <li>bits | + | <li>bits 64-95 an unsigned 32 bit packet buffer total byte length from beginning of file (BOF) for reassembly</li> |

| − | <li>bits | + | <li>bits 96-159 an unsigned 64 bit tick or event number</li> |

</ul> | </ul> | ||

In standard IETF RFC format: | In standard IETF RFC format: | ||

| − | <pre>protocol | + | <pre> |

| − | + | protocol 'Version:4, Rsvd:12, Data-ID:16, Offset:32, Length:32, Tick:64' | |

| − | + | ||

| − | +-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+ | + | +-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+ |

| − | | | + | 0 1 2 3 |

| − | +-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+ | + | 0 1 2 3 4 5 6 7 8 9 0 1 2 3 4 5 6 7 8 9 0 1 2 3 4 5 6 7 8 9 0 1 2 |

| − | | | + | |Version | Rsvd | Data-ID | |

| − | +-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+ | + | +-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+ |

| + | bytes 4-7 | ||

| + | | Buffer Offset | | ||

| + | +-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+ | ||

| + | bytes 8-11 | ||

| + | | Buffer Length | | ||

| + | +-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+ | ||

| + | bytes 12-19 | ||

| + | | | | ||

| + | + Tick + | ||

| + | | | | ||

| + | +-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+ | ||

</pre> | </pre> | ||

The '''Data Id''' field is a shared convention between data source and sink and is populated (or ignored) to suit the data transfer / load balance application, e.g., for the JLab particle detector DAQ system it would likely be ROC channel # or proxy. | The '''Data Id''' field is a shared convention between data source and sink and is populated (or ignored) to suit the data transfer / load balance application, e.g., for the JLab particle detector DAQ system it would likely be ROC channel # or proxy. | ||

| − | The ''sequence number'' or optionally data ''offset'' byte number provides the RE with the necessary information to reassemble the transferred data into a meaningful contiguous sequence and is a shared convention between data source and sink. As such, the relationship between ''data_id'', and ''sequence number'' or ''offset'' is undefined and is application specific. In many use cases for example, the ''sequence number'' or ''offset'' will be subordinate to the ''data_id'', i.e., each set of packets with a | + | The ''sequence number'' or optionally data ''offset'' byte number provides the RE with the necessary information to reassemble the transferred data into a meaningful contiguous sequence and is a shared convention between data source and sink. As such, the relationship between ''data_id'', and ''sequence number'' or ''offset'' is undefined and is application specific. In many use cases for example, the ''sequence number'' or ''offset'' will be subordinate to the ''data_id'', i.e., each set of packets with a common ''data_id'' will be individually sequenced as a distinct group from other groups with a different ''data_id'' for a common ''tick'' value. |

| − | Strictly speaking, the RE meta-data is opaque to the LB and therefore considered as part of the payload and is itself therefore a convention between data producer/consumer. | + | '''Strictly speaking, the RE meta-data is opaque to the LB and therefore considered as part of the payload and is itself therefore a convention between data producer/consumer.''' |
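The 160 bit RE meta-data layout listed above can be sketched in Python with the standard `struct` module. This is only an illustration under stated assumptions: the RE meta-data byte order is left to the application, so big endian is chosen here merely for symmetry with the LB meta-data, and the function names and the default `version=1` are hypothetical rather than part of EJFAT.

```python
import struct

# Version:4 + Rsvd:12 share the first 16 bit word, then Data-ID:16,
# Offset:32, Length:32, Tick:64 -> 20 bytes total.
# ASSUMPTION: big endian ("!") byte order; the document leaves this open.
RE_HEADER = struct.Struct("!HHIIQ")


def pack_re_header(data_id, offset, length, tick, version=1):
    """Build the 20 byte RE meta-data block (version value is hypothetical)."""
    if not 0 <= version <= 0xF:
        raise ValueError("version must fit in 4 bits")
    first_word = version << 12  # reserved 12 bits are left as zero
    return RE_HEADER.pack(first_word, data_id, offset, length, tick)


def unpack_re_header(data):
    """Decode the RE meta-data found at the start of `data`."""
    first_word, data_id, offset, length, tick = RE_HEADER.unpack_from(data)
    return {
        "version": first_word >> 12,
        "data_id": data_id,
        "offset": offset,
        "length": length,
        "tick": tick,
    }
```

Because the LB treats these bytes as payload, a producer and consumer that agree on a different byte order would only need to change the format string.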

The resultant data stream is shown just below the block diagram in Figure X and depicts the stream UDP packet structure from the source data system to the LB. Individual packets are meta-data tagged both for the LB, to route based on '''tick''' to the proper compute node, and for the RE with packet '''offset''' spanning the collection of packets for a single '''tick''' for eventual destination reassembly. | The resultant data stream is shown just below the block diagram in Figure X and depicts the stream UDP packet structure from the source data system to the LB. Individual packets are meta-data tagged both for the LB, to route based on '''tick''' to the proper compute node, and for the RE with packet '''offset''' spanning the collection of packets for a single '''tick''' for eventual destination reassembly. | ||

| − | The depicted sequence is only illustrative, and no assumption about the order of packets with respect to either '''tick''' or '''offset''' | + | The depicted sequence is only illustrative, and no assumption about the order of packets with respect to either '''tick''' or '''offset''' is made by the LB, nor should any be made by the RE. |
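Since packets may arrive in any order, a reassembly step has to place each payload by its ''offset'' rather than by arrival order. The following Python sketch illustrates that idea; it is not the EJFAT RE implementation. It assumes the ''length'' field carries the total size of the buffer being reassembled, that fragments are not duplicated, and that the hypothetical function `reassemble` receives already-decoded tuples.

```python
def reassemble(fragments):
    """Reassemble buffers from (tick, data_id, offset, total_length, payload)
    tuples that may arrive in any order.

    Returns a dict mapping (tick, data_id) to the completed bytes.
    ASSUMPTIONS: total_length is the full buffer size; no duplicate fragments.
    """
    pending = {}    # (tick, data_id) -> [bytearray, bytes received so far]
    completed = {}
    for tick, data_id, offset, total_length, payload in fragments:
        key = (tick, data_id)
        if key not in pending:
            pending[key] = [bytearray(total_length), 0]
        buffer = pending[key][0]
        end = offset + len(payload)
        if end > len(buffer):
            raise ValueError("fragment extends past the declared buffer length")
        buffer[offset:end] = payload        # place by offset, not arrival order
        pending[key][1] += len(payload)
        if pending[key][1] == len(buffer):  # every byte has been received
            completed[key] = bytes(buffer)
            del pending[key]
    return completed
```

Keying the pending buffers on the pair (tick, data_id) reflects the common case described above, where offsets are subordinate to the ''data_id'' within a ''tick''.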

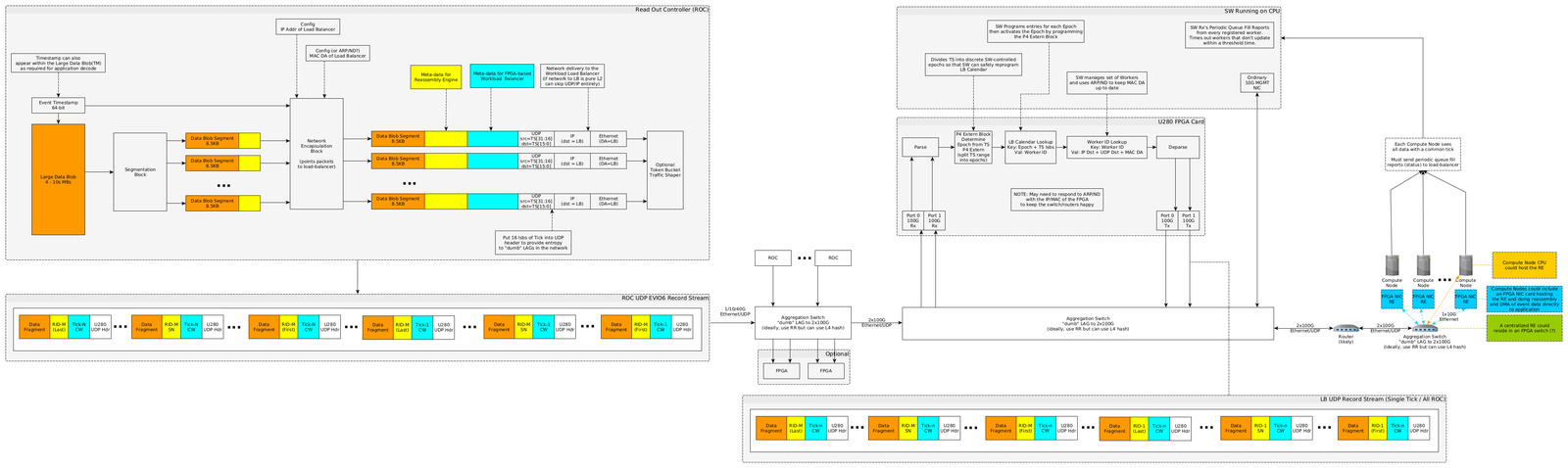

[[File:Esnet-JLab-network-diagram-v002d-roc.png|border|1600px|link=|"Data Source Stream Processing"]] | [[File:Esnet-JLab-network-diagram-v002d-roc.png|border|1600px|link=|"Data Source Stream Processing"]] | ||

= UDP Header = | = UDP Header = | ||

| − | The UDP Header | + | The UDP Header ''Source Port'' field '''should''' be modified/populated as follows for LAG switch entropy: |

| − | + | '''Source Port''' = lower 16 bits of Load Balancer '''Tick''' | |

| − | + | ||

| − | + | An example of how this can be done is available at: | |

| − | + | ||

| + | [https://stackoverflow.com/questions/9873061/how-to-set-the-source-port-in-the-udp-socket-in-c How to set the UDP Source Port in C] | ||

| − | The | + | The UDP Header ''Destination Port'' field '''must''' be modified/populated with a value that indicates the LB should perform load balancing (else packet is discarded) as follows: |

| + | '''Destination Port''' = 'LB' = 0x4c42 | ||
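As a small illustration of the two port rules above, the Python sketch below derives the source port from the tick and checks that the destination port value is the two ASCII bytes 'LB'. The names `entropy_source_port` and `open_sender` are hypothetical. Note that binding to a source port below 1024 usually requires elevated privileges, and that port 0 asks the operating system to pick a port, so a real sender may need to remap such values.

```python
import socket

# Destination port that tells the LB to load balance the packet: 'LB' = 0x4C42
LB_DEST_PORT = int.from_bytes(b"LB", "big")


def entropy_source_port(tick):
    """Source port = lower 16 bits of the Load Balancer tick."""
    return tick & 0xFFFF


def open_sender(tick):
    """Open a UDP socket bound to the tick-derived source port.

    ASSUMPTION: one socket per source port; a production sender would
    likely reuse a pool of sockets instead of binding per tick.
    """
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    sock.bind(("", entropy_source_port(tick)))
    return sock
```

A sender would then call `sock.sendto(packet, (lb_address, LB_DEST_PORT))`, where `lb_address` stands for the well known DP IP address.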

= Data Source Aggregation Switch = | = Data Source Aggregation Switch = | ||

| Line 129: | Line 144: | ||

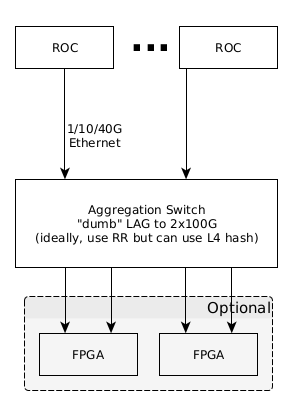

Individual Data Source channels will be aggregated for maximum throughput by a switch using Link Aggregation Protocol (LAG) or similar where the network traffic downstream of the switch will be addressed to the LB FPGA (see Figure X, Appendix X). | Individual Data Source channels will be aggregated for maximum throughput by a switch using Link Aggregation Protocol (LAG) or similar where the network traffic downstream of the switch will be addressed to the LB FPGA (see Figure X, Appendix X). | ||

| − | If the LAG configured switch proves to be incapable of meeting line rate throughput ( | + | If the LAG configured switch proves to be incapable of meeting line rate throughput (200 Gbps), then one or more additional FPGAs can be engineered to subsume this function as depicted in the Figure below. |

[[File:Esnet-JLab-network-diagram-v002a-roc-1.png|border|"Data Source Channel Load Balancing"]] | [[File:Esnet-JLab-network-diagram-v002a-roc-1.png|border|"Data Source Channel Load Balancing"]] | ||

| Line 136: | Line 151: | ||

The FPGA resident LB aggregates data across all so designated source data channels for a single discrete '''tick''' and routes this aggregated data to individual end compute nodes in cooperation with the FPGA host chassis CPU using algorithms designed for the host CPU and feedback received from the end compute node farm, maintaining complete opacity of the UDP payload to the LB (except for the LB meta-data). | The FPGA resident LB aggregates data across all so designated source data channels for a single discrete '''tick''' and routes this aggregated data to individual end compute nodes in cooperation with the FPGA host chassis CPU using algorithms designed for the host CPU and feedback received from the end compute node farm, maintaining complete opacity of the UDP payload to the LB (except for the LB meta-data). | ||


[[File:esnet-JLab-network-diagram-v002d-lb.png|border|1500px|link=|"Load Balancer/Host CPU Processing"]] | [[File:esnet-JLab-network-diagram-v002d-lb.png|border|1500px|link=|"Load Balancer/Host CPU Processing"]] | ||

| − | = | + | = Load Balancer Pipeline API = |


| − | + | [https://docs.google.com/document/d/1ssw8sye7jExtPCJVejloe8hNkyWOcxEQzVmm45xs5-w/edit#heading=h.b8k68ix2wf30 link] | |


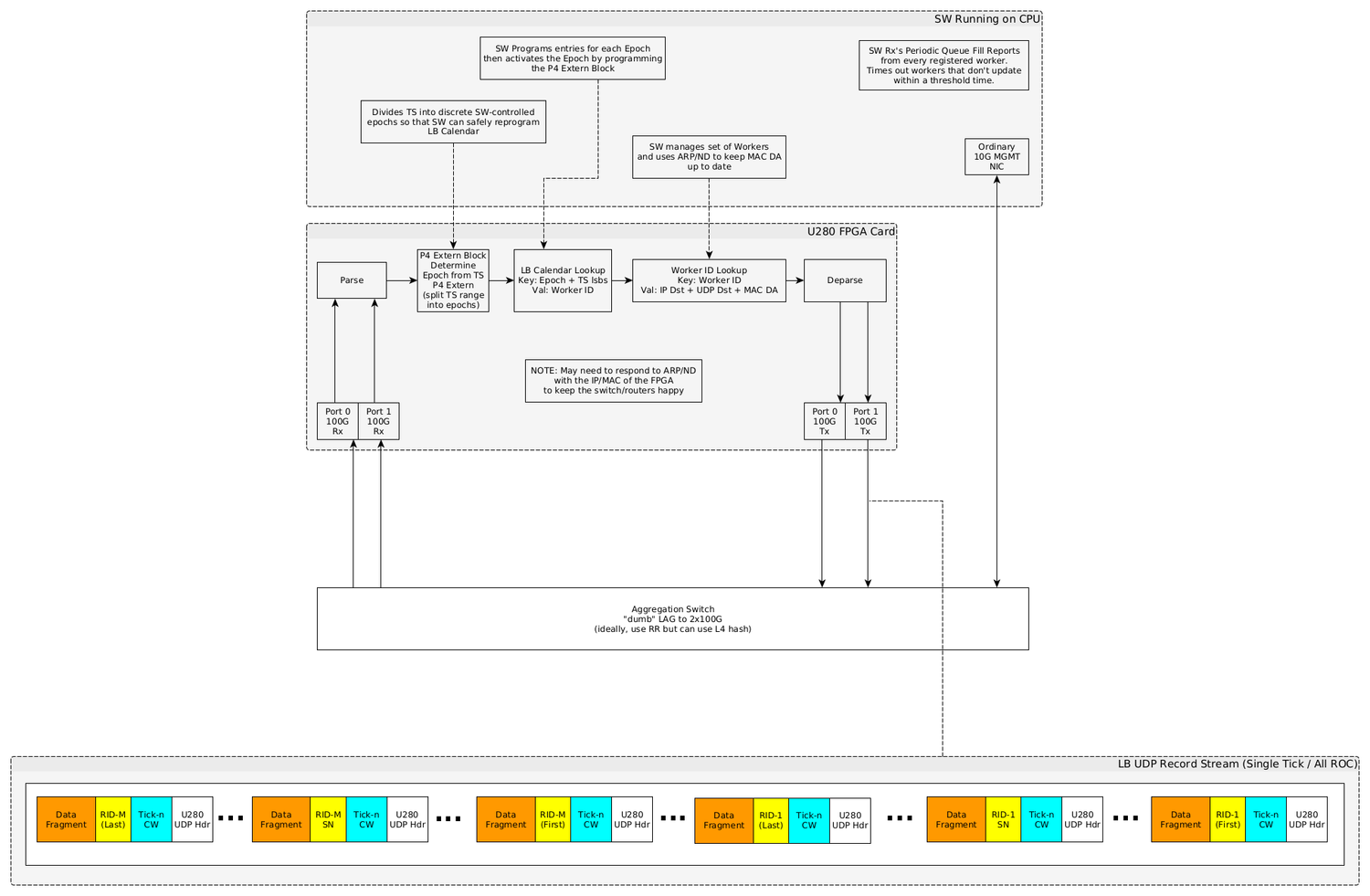

= Appendix: EJFAT Processing = | = Appendix: EJFAT Processing = | ||

[[File:esnet-jlab-network-diagram-v002d.png|border|1600px|link=|"EJFAT Load Balanced Transport"]] | [[File:esnet-jlab-network-diagram-v002d.png|border|1600px|link=|"EJFAT Load Balanced Transport"]] | ||


Latest revision as of 12:24, 9 October 2024

Abstract

We describe an engineering collaboration between Energy Sciences Network (ESnet) and Thomas Jefferson National Laboratory (JLab) for proof of concept engineering for an accelerated load balancer (LB) for servicing Data Acquisition/Production (DAQ) systems using dynamic IPv4/IPv6 address forwarding. Dynamic because the forwarding address is chosen dynamically based on near real-time destination workload conditions, and accelerated because the forwarding is accomplished with low fixed latency at line rates of up to 200 Gbps per Field Programmable Gate Array (FPGA), where in general a functioning LB may consist of up to four FPGAs acting as one logical Data Plane (DP) for a total bandwidth approaching 1 Tbps.

EJFAT Overview

This collaboration between ESnet and JLab for FPGA Accelerated Transport (EJFAT) seeks a capability to dynamically redirect UDP traffic based on endpoint feedback. Packets that share an aggregation tag go to a single, dynamically determined IP, and packets tagged with a subordinate substream within that aggregation tag go to individual ports of the selected IP.

EJFAT will add meta-data to UDP data packet streams to be used both by

- the intervening FPGA based DP, acting as a work Load Balancer (LB) that redirects UDP/IP data packets from multiple input streams sharing a common aggregation tag to a dynamically selected single IP endpoint

- an endpoint Reassembly Engine (RE) to perform required reassembly at the endpoint of the individual input streams bearing a subordinate sub-stream id tag to facilitate synthesizing a data event.

The aggregation/reassembly meta-data included in the source data header in the payload is generic in design and can be utilized for scientific instrument originating streamed data or more conventional sources (e.g., stored/replayed data) to a back-end compute farm.

In triggered readout systems, the aggregation tag is normally associated with a physics or other phenomenological event, and is often a timestamp index. In streaming readout (SRO) systems, the aggregation tag is more arbitrary and will likely be a sequential time window index. In the SRO case, phenomenological events will likely span aggregation tags, but would be expected to fall in nearest neighbor tags.

Data producers send meta-data tagged UDP data to a well known DP IP/port and the LB Control Plane (CP), a software agent not necessarily co-located with the DP, is the LB interface for data consumers. Consumers use a publish-subscribe protocol to make an unsolicited announcement of their capacity for work to the CP with frequent updates, thus the back end processing resources are of arbitrary composition and free to span facilities and scale as desired/directed.

The principal functions of the CP are to manage subscriptions/withdrawals of consuming resources and dynamically apportion data events to subscribed nodes according to their frequently changing relative capacity to receive new work.
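One way to picture apportioning events by relative capacity is a slot table in which each subscribed node owns a share of slots proportional to its announced capacity, with the tick selecting a slot. The Python sketch below is purely illustrative and is not a description of the actual CP algorithm; the function names, the slot count, and the capacity values are all assumptions.

```python
def build_slot_table(capacities, slots):
    """Return a list of `slots` node names, with each node owning a share
    proportional to its relative capacity.

    `capacities` maps node name -> non-negative relative capacity.
    HYPOTHETICAL scheme, for illustration only.
    """
    nodes = list(capacities)
    total = sum(capacities[node] for node in nodes)
    if total <= 0:
        raise ValueError("at least one node must advertise capacity")
    table = []
    for i in range(slots):
        point = (i + 0.5) / slots * total   # midpoint of slot i on the capacity axis
        running = 0.0
        for node in nodes:
            running += capacities[node]
            if point < running:
                table.append(node)
                break
        else:
            table.append(nodes[-1])         # guard against float rounding
    return table


def node_for_tick(tick, table):
    """All packets sharing a tick map to the same slot, hence the same node."""
    return table[tick % len(table)]
```

When a node publishes a new capacity or withdraws, the table would simply be rebuilt, so later ticks follow the updated proportions.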

The secure connection between the DAQ and LB can be integrated once, regardless of the final selection of computational facilities. Likewise, computational facilities do not have work pushed into them; instead, they dynamically register and withdraw processing resources for service with the LB. This integration is also done once between the LB and the compute center, rather than once per experiment or DAQ facility.

Data Source Processing

Any data source wishing to take advantage of the EJFAT Load Balancer e.g., the Read Out Controllers (ROC) of the JLab DAQ system, must be prepared to stream data via UDP by including additional meta-data prepended to the actual UDP payload.

Additionally, optionally randomizing the UDP header source port will induce LAG switch entropy at the front edge and optimize traffic flow through the network fabric to the destination.

This new meta-data, populated by the data source, consists of two parts, the first for the LB and the second for the RE, which respectively allow:

- the LB to route all UDP packets with a common aggregation tag value to a single destination endpoint

- the destination RE to reassemble packets with a common aggregation tag into proper sequence for each substream or channel within the overarching aggregation tag.

The LB meta-data, processed by the LB, is to be in network or big endian order. The rest of the data including the RE meta-data can be formatted at the discretion of the EJFAT application.

Load Balancer Meta-Data

The LB meta-data is 128 bits and consists of two 64 bit words:

- LB Control Word bits 0-63 such that

- bits 0-7 the 8 bit ASCII character ’L’ = 76 = 0x4C

- bits 8-15 the 8 bit ASCII character ’B’ = 66 = 0x42

- bits 16-23 the 8 bit LB version number starting at 1 (constant for run duration): Currently = 2.

- bits 24-31 the 8 bit Protocol Number (very useful for protocol decoders e.g., wireshark/tshark )

- bits 32-47 Reserved

- bits 48-63 an unsigned 16 bit Channel or substream (e.g., ROC id) value for destination port selection

- Aggregation Control Word bits 64-127 an unsigned 64 bit aggregation tag or tick that for the duration of an experiment data transfer session

- Monotonically increases

- Unique

- Never rolls over

- Never resets

- Serves as the top level aggregation tag across packets.

In standard IETF RFC format:

protocol 'L:8,B:8,Version:8,Protocol:8,Reserved:16,Entropy:16,Tick:64'

 0                   1                   2                   3

 0 1 2 3 4 5 6 7 8 9 0 1 2 3 4 5 6 7 8 9 0 1 2 3 4 5 6 7 8 9 0 1

+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+

|      'L'      |      'B'      |    Version    |   Protocol    |

+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+

 3               4                   5                   6

 2 3 4 5 6 7 8 9 0 1 2 3 4 5 6 7 8 9 0 1 2 3 4 5 6 7 8 9 0 1 2 3

+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+

|             Rsvd              |            Channel            |

+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+

 6                                               12

 4 5 ...           ...           ...             0 1 2 3 4 5 6 7

+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+

|                                                               |

+                             Tick                              +

|                                                               |

+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+

The value of the Aggregation Tag, Event ID, or simply Tick field is populated by the data source, and the LB data plane redirects all UDP packets with a shared value to a single destination host IP. For the JLab particle detector triggered DAQ system, for example, it would likely be a timestamp; otherwise it will be some kind of up-counting index. The Channel field (bits 48-63) indicates the logical channel within the data event (tick); channels within an event must be independently reassembled.
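The LB meta-data layout can be sketched in C as a byte-wise pack into a 16 byte buffer, which yields network (big endian) order regardless of host endianness. The names `lb_hdr_pack`, `lb_hdr_tick`, and `LB_HDR_LEN` are hypothetical and not part of any EJFAT library; the protocol number is passed in because its value is not defined in this section.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

#define LB_HDR_LEN 16  /* 128 bits of LB meta-data */

/* Hypothetical helper: write the LB meta-data in network byte order. */
void lb_hdr_pack(uint8_t out[LB_HDR_LEN], uint8_t version, uint8_t protocol,
                 uint16_t channel, uint64_t tick)
{
    assert(out != NULL);
    out[0] = 'L';                         /* bits 0-7:   0x4C */
    out[1] = 'B';                         /* bits 8-15:  0x42 */
    out[2] = version;                     /* bits 16-23: LB version */
    out[3] = protocol;                    /* bits 24-31: protocol number */
    out[4] = 0;                           /* bits 32-47: reserved */
    out[5] = 0;
    out[6] = (uint8_t)(channel >> 8);     /* bits 48-63: channel, MSB first */
    out[7] = (uint8_t)(channel & 0xFF);
    for (int i = 0; i < 8; i++)           /* bits 64-127: tick, MSB first */
        out[8 + i] = (uint8_t)(tick >> (56 - 8 * i));
}

/* Hypothetical helper: read the tick back out of a packed header. */
uint64_t lb_hdr_tick(const uint8_t in[LB_HDR_LEN])
{
    assert(in != NULL);
    uint64_t tick = 0;
    for (int i = 0; i < 8; i++)
        tick = (tick << 8) | in[8 + i];
    return tick;
}
```

A sender would call `lb_hdr_pack` into the first 16 bytes of its UDP payload buffer, followed by the RE meta-data and the data itself.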

Reassembly Engine Meta-Data

The RE meta-data is 160 bits and consists of

- bits 0-3 the 4 bit Version number

- bits 4-15 a 12 bit Reserved field

- bits 16-31 an unsigned 16 bit Data Id

- bits 32-63 an unsigned 32 bit packet buffer offset byte number from beginning of file (BOF) for reassembly

- bits 64-95 an unsigned 32 bit packet buffer total byte length from beginning of file (BOF) for reassembly

- bits 96-159 an unsigned 64 bit tick or event number

In standard IETF RFC format:

protocol 'Version:4, Rsvd:12, Data-ID:16, Offset:32, Length:32, Tick:64'

 0                   1                   2                   3

 0 1 2 3 4 5 6 7 8 9 0 1 2 3 4 5 6 7 8 9 0 1 2 3 4 5 6 7 8 9 0 1

+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+

|Version|         Rsvd          |            Data-ID            |

+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+

bytes 4-7

|                         Buffer Offset                         |

+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+

bytes 8-11

|                         Buffer Length                         |

+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+

bytes 12-19

|                                                               |

+                             Tick                              +

|                                                               |

+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+

The Data Id field is a shared convention between data source and sink and is populated (or ignored) to suit the data transfer / load balance application, e.g., for the JLab particle detector DAQ system it would likely be the ROC channel # or a proxy.

The sequence number or optionally data offset byte number provides the RE with the necessary information to reassemble the transferred data into a meaningful contiguous sequence and is a shared convention between data source and sink. As such, the relationship between data_id and sequence number or offset is undefined and is application specific. In many use cases, for example, the sequence number or offset will be subordinate to the data_id, i.e., each set of packets with a common data_id will be individually sequenced as a distinct group from other groups with a different data_id for a common tick value.

Strictly speaking, the RE meta-data is opaque to the LB, is therefore considered part of the payload, and is itself a convention between data producer and consumer.
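For illustration, the 160 bit RE meta-data can be packed in the same byte-wise fashion. Since RE byte order is left to the application, this sketch assumes network (big endian) order as one possible producer/consumer convention. The names `re_hdr_pack` and `RE_HDR_LEN` and the helper functions are hypothetical.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

#define RE_HDR_LEN 20  /* 160 bits of RE meta-data */

/* Store a 32 bit value MSB first. */
void re_put_be32(uint8_t *p, uint32_t v)
{
    p[0] = (uint8_t)(v >> 24);
    p[1] = (uint8_t)(v >> 16);
    p[2] = (uint8_t)(v >> 8);
    p[3] = (uint8_t)v;
}

/* Hypothetical helper: pack the RE meta-data, ASSUMING big endian order
 * (the document leaves RE byte order to the application). */
void re_hdr_pack(uint8_t out[RE_HDR_LEN], uint8_t version, uint16_t data_id,
                 uint32_t offset, uint32_t length, uint64_t tick)
{
    assert(out != NULL);
    out[0] = (uint8_t)((version & 0x0F) << 4); /* bits 0-3 version, 4-7 rsvd */
    out[1] = 0;                                /* bits 8-15 reserved */
    out[2] = (uint8_t)(data_id >> 8);          /* bits 16-31 Data Id */
    out[3] = (uint8_t)(data_id & 0xFF);
    re_put_be32(out + 4, offset);              /* bits 32-63 buffer offset */
    re_put_be32(out + 8, length);              /* bits 64-95 buffer length */
    re_put_be32(out + 12, (uint32_t)(tick >> 32)); /* bits 96-159 tick */
    re_put_be32(out + 16, (uint32_t)tick);
}
```

Because the LB never inspects these bytes, a different layout or byte order works equally well as long as source and sink agree on it.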

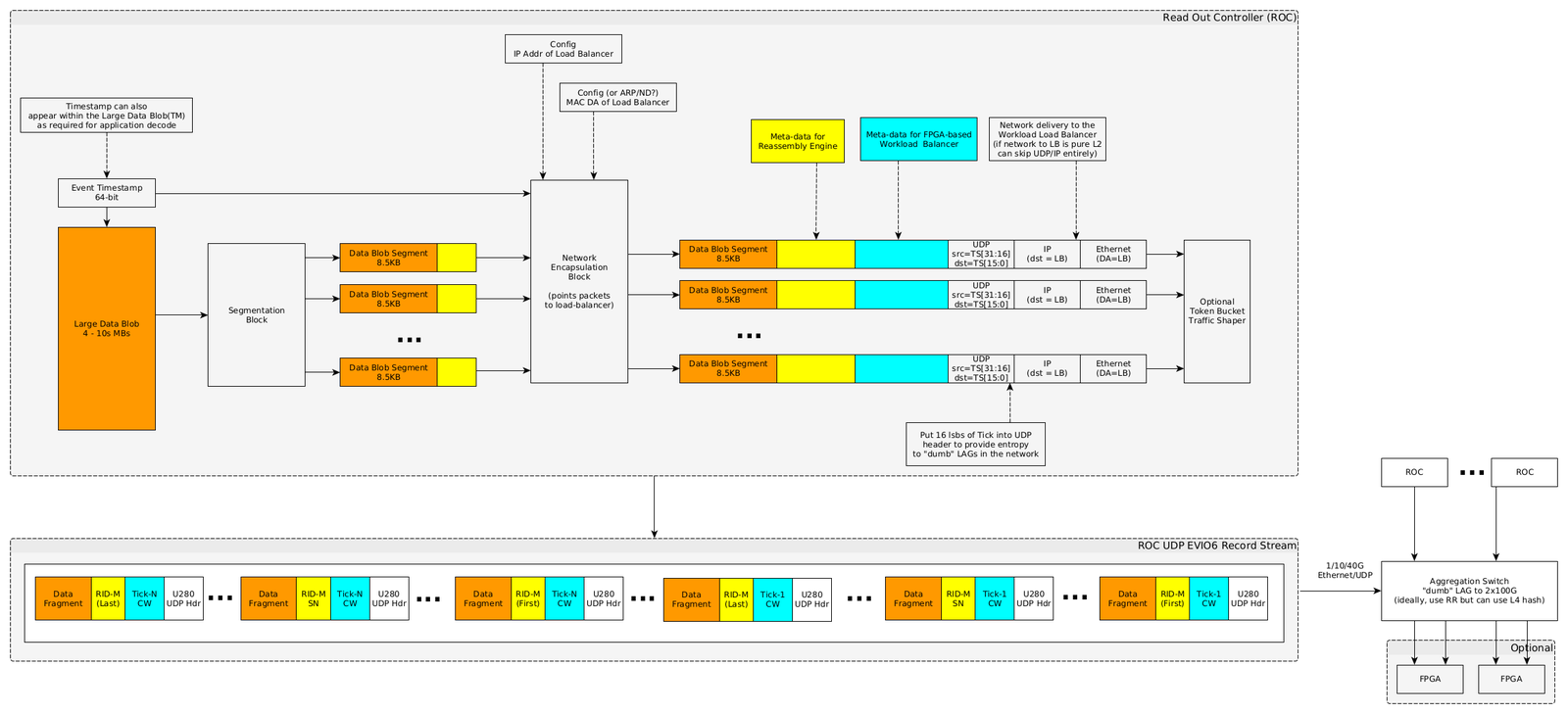

The resultant data stream is shown just below the block diagram in Figure X and depicts the stream UDP packet structure from the source data system to the LB. Individual packets are meta-data tagged both for the LB, to route based on tick to the proper compute node, and for the RE with packet offset spanning the collection of packets for a single tick for eventual destination reassembly.

The depicted sequence is only illustrative; the LB makes no assumption about the order of packets with respect to either tick or offset, and the RE should make none either.
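A minimal sketch of order-independent reassembly for one tick and one channel follows: each fragment is copied to its Buffer Offset, and the buffer is complete once the received byte count equals the Buffer Length. The type `re_buffer`, the limit `RE_MAX_EVENT`, and the function names are hypothetical, and the sketch assumes fragments never overlap or repeat.

```c
#include <assert.h>
#include <stdint.h>
#include <string.h>

#define RE_MAX_EVENT 4096  /* hypothetical upper bound on one event buffer */

typedef struct {
    uint8_t  data[RE_MAX_EVENT];
    uint32_t total;     /* Buffer Length from the RE meta-data */
    uint32_t received;  /* bytes copied in so far */
} re_buffer;

/* Prepare a buffer for an event of `total` bytes. Returns -1 if too large. */
int re_buffer_init(re_buffer *b, uint32_t total)
{
    assert(b != NULL);
    if (total > RE_MAX_EVENT)
        return -1;
    memset(b, 0, sizeof *b);
    b->total = total;
    return 0;
}

/* Place one fragment at its offset, in whatever order it arrives.
 * Returns 1 when the event is complete, 0 if more data is expected,
 * and -1 if the fragment does not fit. ASSUMES no duplicate fragments. */
int re_buffer_add(re_buffer *b, uint32_t offset,
                  const uint8_t *payload, uint32_t len)
{
    assert(b != NULL && payload != NULL);
    if (offset > b->total || len > b->total - offset)
        return -1;
    memcpy(b->data + offset, payload, len);
    b->received += len;
    return b->received == b->total ? 1 : 0;
}
```

A real RE would keep one such buffer per (tick, channel) pair and would also need a policy for lost or duplicated UDP packets, which this sketch does not address.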

UDP Header

The UDP Header Source Port field should be modified/populated as follows for LAG switch entropy:

Source Port = lower 16 bits of Load Balancer Tick

An example of how this can be done is available at:

How to set the UDP Source Port in C

The UDP Header Destination Port field must be modified/populated with a value that indicates the LB should perform load balancing (else packet is discarded) as follows:

Destination Port = 'LB' = 0x4c42
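The two port rules can be sketched with the standard BSD sockets API as below. The function names are hypothetical; the sketch assumes IPv4, and it binds one socket to the derived source port, which is only one way to control the source port (and may require privileges for ports below 1024, or yield an OS-chosen port when the low 16 bits of the tick are zero).

```c
#include <arpa/inet.h>
#include <assert.h>
#include <netinet/in.h>
#include <stdint.h>
#include <string.h>
#include <sys/socket.h>
#include <unistd.h>

#define LB_DEST_PORT 0x4C42  /* 'L','B': tells the LB to load balance */

/* Source port = lower 16 bits of the LB tick, for LAG switch entropy. */
uint16_t lb_source_port(uint64_t tick)
{
    return (uint16_t)(tick & 0xFFFF);
}

/* Fill dst with the LB address and the fixed destination port.
 * Returns 0 on success, -1 if ip is not a valid IPv4 dotted quad. */
int lb_dest_addr(const char *ip, struct sockaddr_in *dst)
{
    assert(ip != NULL && dst != NULL);
    memset(dst, 0, sizeof *dst);
    dst->sin_family = AF_INET;
    dst->sin_port = htons(LB_DEST_PORT);
    return inet_pton(AF_INET, ip, &dst->sin_addr) == 1 ? 0 : -1;
}

/* Open a UDP socket bound to the source port derived from tick.
 * Returns the socket descriptor, or -1 on failure. */
int lb_open_socket(uint64_t tick)
{
    int fd = socket(AF_INET, SOCK_DGRAM, 0);
    if (fd < 0)
        return -1;
    struct sockaddr_in src;
    memset(&src, 0, sizeof src);
    src.sin_family = AF_INET;
    src.sin_addr.s_addr = htonl(INADDR_ANY);
    src.sin_port = htons(lb_source_port(tick));
    if (bind(fd, (struct sockaddr *)&src, sizeof src) < 0) {
        close(fd);
        return -1;
    }
    return fd;
}
```

After `lb_open_socket`, a sender would pass the address from `lb_dest_addr` to `sendto`. Since the source port changes with the tick, a high-rate sender would likely keep a pool of pre-bound sockets or build packets another way rather than binding per packet.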

Data Source Aggregation Switch

Individual Data Source channels will be aggregated for maximum throughput by a switch using a Link Aggregation Group (LAG) or similar, where the network traffic downstream of the switch will be addressed to the LB FPGA (see Figure X, Appendix X).

If the LAG configured switch proves incapable of meeting line rate throughput (200 Gbps), then additional FPGA(s) can be engineered to subsume this function as depicted in the figure below.

LB Processing

The FPGA resident LB aggregates data across all so designated source data channels for a single discrete tick and routes this aggregated data to individual end compute nodes. It does so in cooperation with the FPGA host chassis CPU, using algorithms designed for the host CPU and feedback received from the end compute node farm. Apart from the LB meta-data, the UDP payload remains completely opaque to the LB.

Load Balancer Pipeline API

Appendix: EJFAT Processing