EJFAT UDP Packet Sending and NUMA Nodes

Transmission between ejfat-2 and U280 on ejfat-1 (Sep 2022)

We can find the NUMA node number of ejfat-2's NIC by looking at the output of:

cat /sys/class/net/enp193s0f1np1/device/numa_node

Which is:

10
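
The same sysfs tree also lists the CPUs that belong to each node, so the cores local to the NIC can be read directly. The following is a minimal sketch, assuming the usual Linux sysfs layout (`/sys/class/net/<iface>/device/numa_node` and `/sys/devices/system/node/node<N>/cpulist`). The function name `nic_local_cpus` and its optional second argument (an alternate sysfs root, only there so it can be tried against a fake directory tree) are made up for illustration:

```shell
#!/bin/sh
# Sketch: report the NUMA node a NIC is attached to and the CPUs local to it.
# Usage: nic_local_cpus IFACE [SYSFS_ROOT]
# SYSFS_ROOT is a hypothetical convenience argument, defaulting to /sys.
nic_local_cpus() {
    iface="$1"
    root="${2:-/sys}"

    # NUMA node the NIC's PCI device is attached to
    node=$(cat "$root/class/net/$iface/device/numa_node") || return 1

    # The kernel reports -1 when the device has no NUMA affinity
    if [ "$node" -lt 0 ]; then
        echo "$iface: no NUMA affinity reported" >&2
        return 1
    fi

    # CPUs belonging to that node, in kernel list format (e.g. "80-87")
    cpus=$(cat "$root/devices/system/node/node$node/cpulist") || return 1

    echo "node=$node cpus=$cpus"
}
```

Given the values shown on this page, `nic_local_cpus enp193s0f1np1` on ejfat-2 would be expected to report node 10 with CPUs 80-87.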

To find out more about the cores and NUMA nodes of ejfat-2, look at the output of:

numactl --hardware

Which is:

    available: 16 nodes (0-15)
    node 0 cpus: 0 1 2 3 4 5 6 7
    node 0 size: 32068 MB
    node 0 free: 31458 MB
    node 1 cpus: 8 9 10 11 12 13 14 15
    node 1 size: 32250 MB
    node 1 free: 31564 MB
    node 2 cpus: 16 17 18 19 20 21 22 23
    node 2 size: 32252 MB
    node 2 free: 31897 MB
    node 3 cpus: 24 25 26 27 28 29 30 31
    node 3 size: 32251 MB
    node 3 free: 31748 MB
    node 4 cpus: 32 33 34 35 36 37 38 39
    node 4 size: 32252 MB
    node 4 free: 31948 MB
    node 5 cpus: 40 41 42 43 44 45 46 47
    node 5 size: 32251 MB
    node 5 free: 31923 MB
    node 6 cpus: 48 49 50 51 52 53 54 55
    node 6 size: 32252 MB
    node 6 free: 31484 MB
    node 7 cpus: 56 57 58 59 60 61 62 63
    node 7 size: 32239 MB
    node 7 free: 31734 MB
    node 8 cpus: 64 65 66 67 68 69 70 71
    node 8 size: 32252 MB
    node 8 free: 31949 MB
    node 9 cpus: 72 73 74 75 76 77 78 79
    node 9 size: 32215 MB
    node 9 free: 31886 MB
    node 10 cpus: 80 81 82 83 84 85 86 87
    node 10 size: 32252 MB
    node 10 free: 30250 MB
    node 11 cpus: 88 89 90 91 92 93 94 95
    node 11 size: 32251 MB
    node 11 free: 31792 MB
    node 12 cpus: 96 97 98 99 100 101 102 103
    node 12 size: 32252 MB
    node 12 free: 31752 MB
    node 13 cpus: 104 105 106 107 108 109 110 111
    node 13 size: 32251 MB
    node 13 free: 31541 MB
    node 14 cpus: 112 113 114 115 116 117 118 119
    node 14 size: 32252 MB
    node 14 free: 31567 MB
    node 15 cpus: 120 121 122 123 124 125 126 127
    node 15 size: 32241 MB
    node 15 free: 31568 MB
    node distances:
    node   0   1   2   3   4   5   6   7   8   9  10  11  12  13  14  15
      0:  10  11  12  12  12  12  12  12  32  32  32  32  32  32  32  32
      1:  11  10  12  12  12  12  12  12  32  32  32  32  32  32  32  32
      2:  12  12  10  11  12  12  12  12  32  32  32  32  32  32  32  32
      3:  12  12  11  10  12  12  12  12  32  32  32  32  32  32  32  32
      4:  12  12  12  12  10  11  12  12  32  32  32  32  32  32  32  32
      5:  12  12  12  12  11  10  12  12  32  32  32  32  32  32  32  32
      6:  12  12  12  12  12  12  10  11  32  32  32  32  32  32  32  32
      7:  12  12  12  12  12  12  11  10  32  32  32  32  32  32  32  32
      8:  32  32  32  32  32  32  32  32  10  11  12  12  12  12  12  12
      9:  32  32  32  32  32  32  32  32  11  10  12  12  12  12  12  12
     10:  32  32  32  32  32  32  32  32  12  12  10  11  12  12  12  12
     11:  32  32  32  32  32  32  32  32  12  12  11  10  12  12  12  12
     12:  32  32  32  32  32  32  32  32  12  12  12  12  10  11  12  12
     13:  32  32  32  32  32  32  32  32  12  12  12  12  11  10  12  12
     14:  32  32  32  32  32  32  32  32  12  12  12  12  12  12  10  11
     15:  32  32  32  32  32  32  32  32  12  12  12  12  12  12  11  10

From this info we see that sending data over the NIC should be fastest from node #10, the same node that services the NIC.

This means that the best performing cores should be:

80 81 82 83 84 85 86 87

The next level down in performance should be node 11 (distance 11 from node 10), or cores:

88 89 90 91 92 93 94 95

The 3rd level down in performance should be nodes 8, 9, 12, 13, 14 and 15 (distance 12 from node 10), or cores:

64-79, 96-127

The 4th level down in performance should be nodes 0-7 (distance 32 from node 10), or cores:

0-63
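
The tiers above can also be derived mechanically from the distance matrix. The following is a rough sketch that reads `numactl --hardware` output on stdin and prints one "distance node" pair per line for the chosen node, nearest first. The function name `nodes_by_distance` is made up for illustration, and the parsing assumes the output layout shown on this page:

```shell
#!/bin/sh
# Sketch: list NUMA nodes ordered by distance from node $1.
# Reads `numactl --hardware` output on stdin; prints "distance node" lines.
nodes_by_distance() {
    awk -v target="$1" '
        # Everything after this line belongs to the distance matrix
        /node distances:/ { in_matrix = 1; next }

        # Matrix header row: "node   0   1   2 ..." gives the column node ids
        in_matrix && $1 == "node" {
            for (i = 2; i <= NF; i++) id[i] = $i
            next
        }

        # Row for the requested node, e.g. "10:  32  32 ..."
        in_matrix && $1 == (target ":") {
            for (i = 2; i <= NF; i++) print $i, id[i]
        }
    ' | sort -k1,1n -k2,2n
}
```

For example, `numactl --hardware | nodes_by_distance 10` on ejfat-2 should list node 10 first (distance 10), then node 11 (distance 11), then nodes 8, 9 and 12-15 (distance 12), and finally nodes 0-7 (distance 32).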

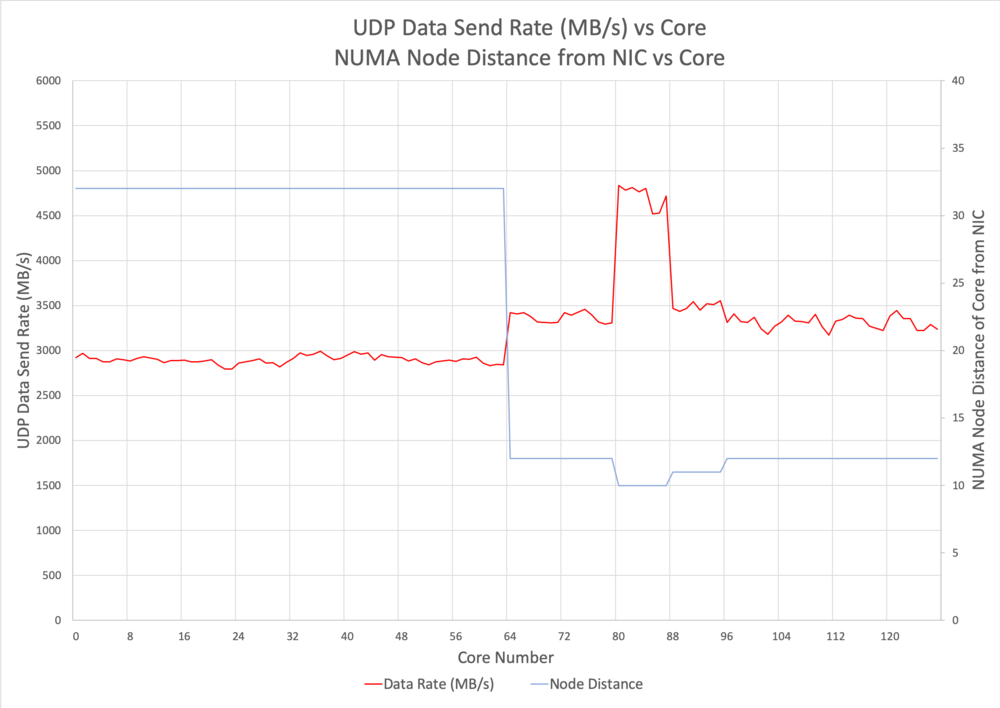

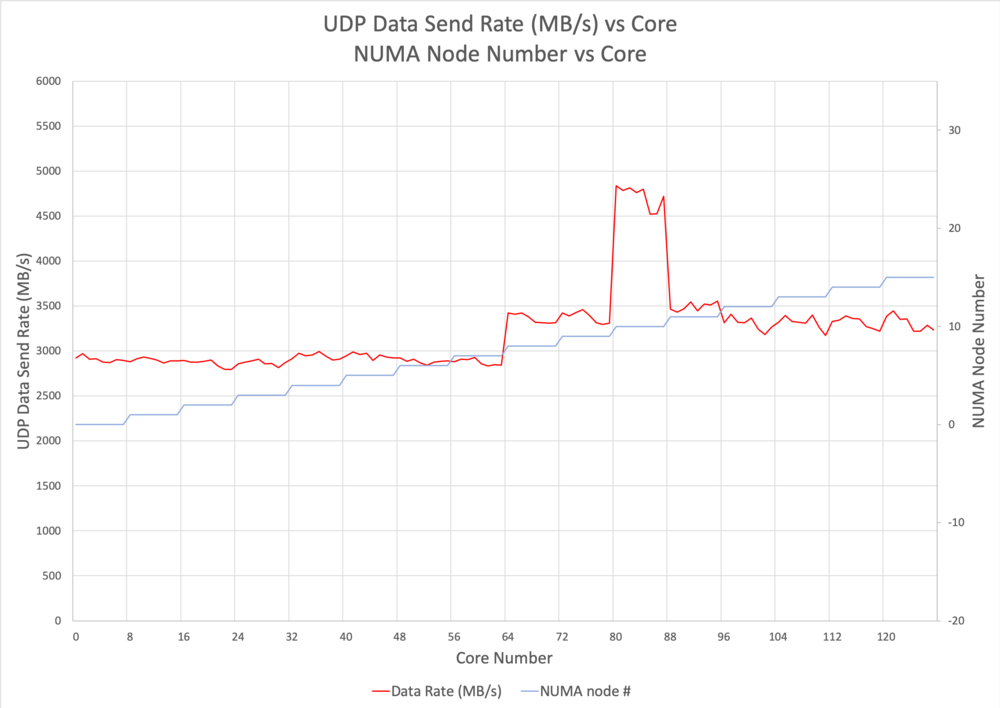

The graphs above were created by a single UDP packet sending application. Data was sent from ejfat-2 to LB on ejfat-1 (172.19.22.241), using

./packetBlaster -p 19522 -host 172.19.22.241 -mtu 9000 -s 25000000 -b 100000 -cores <N>

in which the UDP send buffer was 50 MB and the app sent buffers of 100 kB.

Conclusions

Notice how the data rate spikes when the core used to send is in the same NUMA node as the NIC itself. In addition, the faster rates always corresponded to the NUMA nodes closer to the NIC. Setting the sender's core explicitly is a necessity for best performance, since running the program without specifying it defaults to a low core # such as 8 or 10. The core must be in the range 80-87 in order to get the best performance. Note that running with realtime RR (round-robin) scheduling made the program considerably slower and uneven in performance. It would not run with realtime FIFO scheduling at all.
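
Since the wrong core costs so much, it can help to check a chosen core against the NIC node's CPU list before launching the sender. The following is a minimal sketch of such a check; the helper name `core_in_cpulist` is made up for illustration, and it assumes the kernel's list format (comma-separated values and ranges, such as `80-87` or `0-3,8,10-11`):

```shell
#!/bin/sh
# Sketch: succeed (exit status 0) if core $1 appears in cpulist string $2.
# $2 uses the kernel list format, e.g. "80-87" or "0-3,8,10-11".
core_in_cpulist() {
    core="$1"
    # Split the list on commas and test each entry in turn
    for range in $(printf '%s' "$2" | tr ',' ' '); do
        lo=${range%-*}   # text before the dash (whole entry if no dash)
        hi=${range#*-}   # text after the dash (whole entry if no dash)
        if [ "$core" -ge "$lo" ] && [ "$core" -le "$hi" ]; then
            return 0
        fi
    done
    return 1
}
```

On ejfat-2, something like `core_in_cpulist 80 "$(cat /sys/devices/system/node/node10/cpulist)"` could guard the `packetBlaster` command line, so that a core outside 80-87 is caught before any data is sent.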

Addendum

The NIC on ejfat-3 has been moved! Its NUMA node # is now 6, so it communicates fastest using cores 48 49 50 51 52 53 54 55 (when numbering cores starting with 0).