Difference between revisions of "EJFAT"

| Line 80: | Line 80: | ||

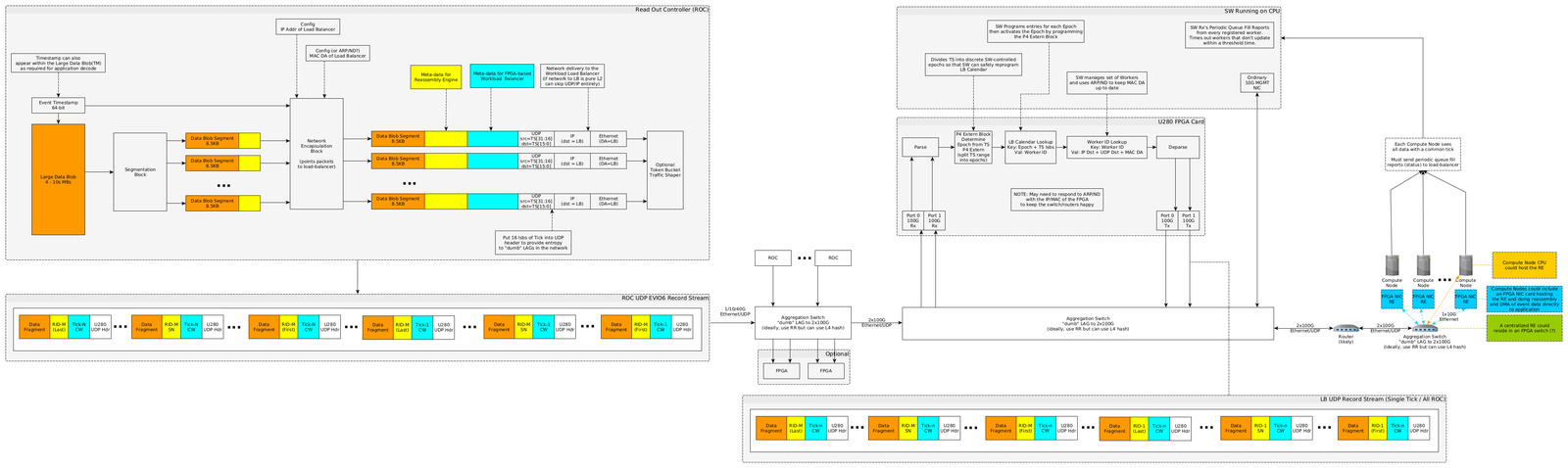

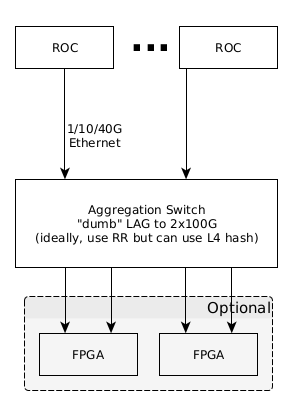

Individual ROC channels will be aggregated for maximum throughput by a switch using Link Aggregation Protocol (LAG) or similar where the network traffic downstream of the switch will be addressed to the LB FPGA (see (Figure X, Appendix X). | Individual ROC channels will be aggregated for maximum throughput by a switch using Link Aggregation Protocol (LAG) or similar where the network traffic downstream of the switch will be addressed to the LB FPGA (see (Figure X, Appendix X). | ||

If the LAG configured switch proves to be incapable of meeting line rate throughput (100Gbs), then an additional FPGA(s) can be engineered to subsume this function as depicted in Figure X. | If the LAG configured switch proves to be incapable of meeting line rate throughput (100Gbs), then an additional FPGA(s) can be engineered to subsume this function as depicted in Figure X. | ||

| + | |||

[[File:Esnet-JLab-network-diagram-v002a-roc-1.png|border|"ROC Channel Load Balancing"]] | [[File:Esnet-JLab-network-diagram-v002a-roc-1.png|border|"ROC Channel Load Balancing"]] | ||

Revision as of 19:24, 11 November 2021

Abstract

We describe a collaboration between the Energy Sciences Network (ESnet) and Thomas Jefferson National Laboratory (JLab) on proof-of-concept engineering to program a Field Programmable Gate Array (FPGA) that routes commonly tagged UDP packets to individual, configurable destination endpoints so that the follow-on compute work is load balanced, and that carries additional tagging for stream reassembly at the endpoint. The primary purpose of this FPGA-based acceleration is to load balance work across destination compute farm endpoints with low latency and at the full line rate of 100 Gb/s, using feedback (back-pressure) from the destination compute farm. ESnet used P4 programming on the FPGA to route packets with a common tag, based on meta-data in the UDP packet stream, to dynamically configurable endpoints controlled by the endpoint farm. Control plane programming tasks included back-pressure notifications from destination endpoints and processing of those notifications by the FPGA host CPU to dynamically reconfigure the routing tables used by the FPGA P4 code.

EJFAT Overview

This collaboration between ESnet and JLab for FPGA Accelerated Transport (EJFAT) seeks a network data transport capability that aggregates and dynamically routes selected UDP traffic using endpoint feedback. EJFAT adds meta-data to UDP data streams for two consumers: the intervening FPGA, acting as a work Load Balancer (LB), uses it to aggregate data packets from multiple logical input streams and route them dynamically to endpoints, and an endpoint Reassembly Engine (RE) uses it to perform custom reassembly of data fragmented by network equipment. While the aggregation and routing meta-data, carried as a header in the payload, is generic in design, it is first being used for streamed (non-triggered) data from the JLab DAQ to the back-end compute farm. In the initial JLab deployment, the FPGA will aggregate by time-stamp across detector Data Acquisition System (DAQ) channels in order to load balance work to individual compute farm destinations in a farm-status-aware manner (see Figure X in Appendix X). Work here means using the data for an individual time-stamp across all DAQ channels to identify or reconstruct detector events. This load balancing of computational work is under direct control of the compute farm, which dynamically manages the routing information communicated to the FPGA host CPU and passed on to the FPGA. Because the aggregated and routed data is opaque to this design, the design should be reusable for other data streams with aggregation and routing needs.

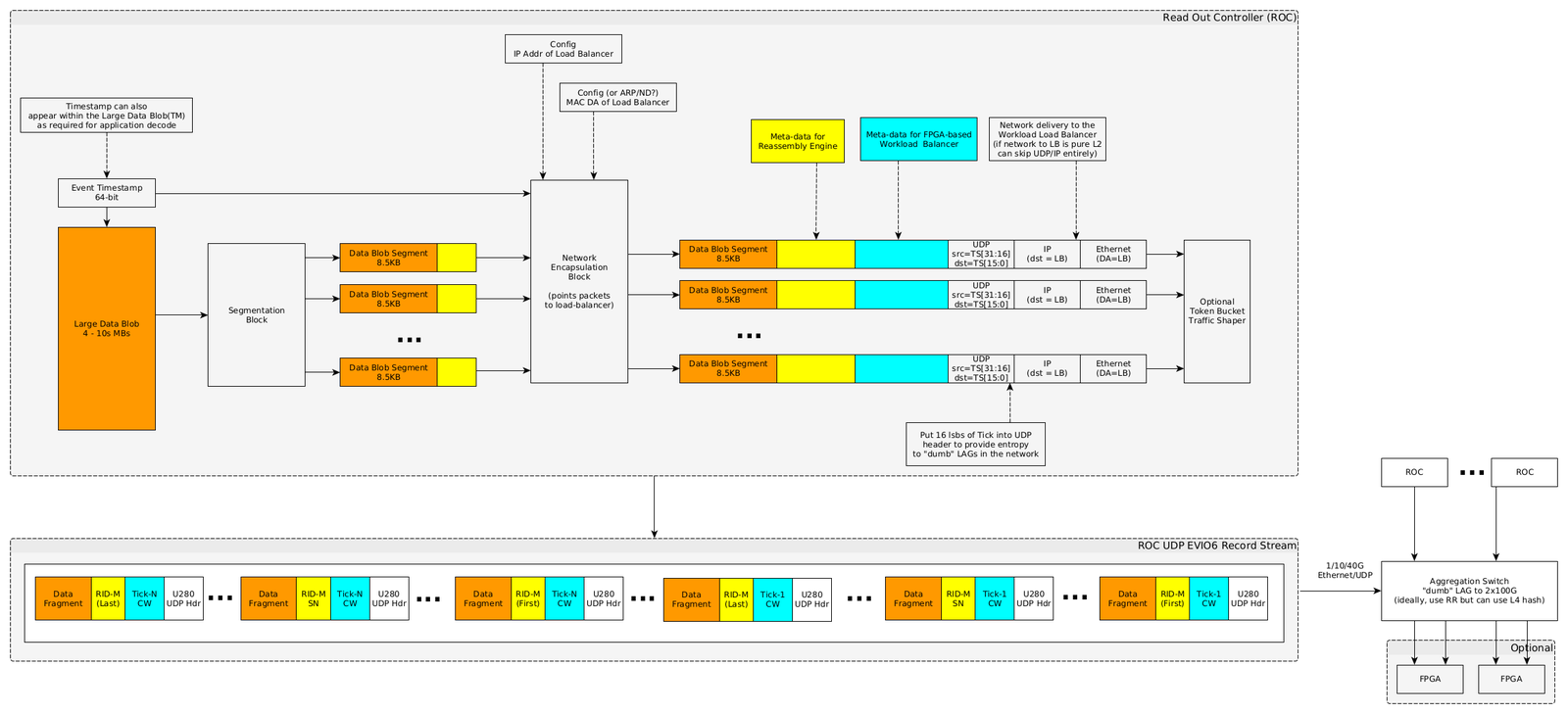

Read Out Controller Processing

The Read Out Controllers (ROC) of the JLab DAQ system will be enhanced to stream data via UDP and to prepend new meta-data to the original payload. This meta-data serves the needs of the LB at the compute destination and of the RE that handles fragmentation at the destination. Figure X is a diagram of the new data stream processing requirements for the JLab DAQ system. The new meta-data, populated by the JLab DAQ system, consists of two parts: the first for the LB and the second for the RE.

Load Balancer Meta-Data

The LB meta-data (Figure X, cyan section) is 96 bits and consists, in order, of

- A 32-bit word (bits 0-31) such that

  - bits 0-7 ASCII character 'L'

  - bits 8-15 ASCII character 'B'

  - bits 16-23 LB version number starting at 1 (constant for run duration)

  - bits 24-31 Protocol Number (very useful for protocol decoders, e.g., wireshark/tshark)

- Tick (time stamp or proxy), a 64-bit quantity (bits 32-95) that for a DAQ run duration

  - Monotonically increases

  - Is unique

  - Never rolls over

  - Never resets

  - Serves as a common tag across multiple DAQ ROC channels/packets related to the same time-stamped data transfer.

In standard IETF RFC format:

    protocol 'L:8,B:8,Version:8,Protocol:8,Tick:64'

     0                   1                   2                   3
     0 1 2 3 4 5 6 7 8 9 0 1 2 3 4 5 6 7 8 9 0 1 2 3 4 5 6 7 8 9 0 1
    +-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+
    |       L       |       B       |    Version    |    Protocol   |
    +-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+
    |                                                               |
    +                              Tick                             +
    |                                                               |
    +-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+
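The layout above can be exercised with a minimal Python sketch that packs and unpacks the 96-bit LB meta-data. The function names, the default protocol number of 1, and the use of network (big-endian) byte order are assumptions made for illustration; the text above fixes only the field widths and the 'L', 'B' characters.

```python
import struct

LB_MAGIC = b"LB"      # bits 0-15: ASCII 'L' then 'B'
LB_FORMAT = "!2sBBQ"  # assumed network byte order: magic, version, protocol, tick


def pack_lb_header(tick, version=1, protocol=1):
    """Build the 12-byte (96-bit) LB meta-data block (protocol=1 is a placeholder)."""
    return struct.pack(LB_FORMAT, LB_MAGIC, version, protocol, tick)


def unpack_lb_header(data):
    """Parse the first 12 bytes of a payload and check the 'LB' characters."""
    magic, version, protocol, tick = struct.unpack(LB_FORMAT, data[:12])
    if magic != LB_MAGIC:
        raise ValueError("payload does not start with an LB header")
    return {"version": version, "protocol": protocol, "tick": tick}


header = pack_lb_header(tick=0x0102030405060708)
fields = unpack_lb_header(header)
```

The 64-bit tick occupies the last eight bytes, so a capture tool can read it at a fixed offset of 4 bytes into the UDP payload.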

Reassembly Engine Meta-Data

The RE meta-data (Figure X, yellow section) is 64 bits and consists of

- bits 0-3 Version number

- bits 4-13 Reserved

- bit 14 indicates first packet

- bit 15 indicates last packet

- bits 16-31 ROC Id

- bits 32-63 packet sequence number or optionally data offset byte number for reassembly

In standard IETF RFC format:

    protocol 'Version:4,Rsvd:10,First:1,Last:1,ROC-ID:16,Offset:32'

     0                   1                   2                   3
     0 1 2 3 4 5 6 7 8 9 0 1 2 3 4 5 6 7 8 9 0 1 2 3 4 5 6 7 8 9 0 1
    +-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+
    |Version|        Rsvd       |F|L|            ROC-ID             |
    +-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+
    |                            Offset                             |
    +-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+-+
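As a rough illustration of the RE bit layout, the following Python sketch packs and unpacks the 64-bit RE meta-data. It assumes IETF bit numbering (bit 0 is the most significant bit of the first word), network byte order, and a default version of 1; the function names are hypothetical.

```python
import struct


def pack_re_header(roc_id, offset, first=False, last=False, version=1):
    """Build the 8-byte RE meta-data block; reserved bits 4-13 are left at zero."""
    # Within the first 16-bit word: version in bits 0-3, first in bit 14, last in bit 15.
    flags = ((version & 0xF) << 12) | (int(first) << 1) | int(last)
    return struct.pack("!HHI", flags, roc_id, offset)


def unpack_re_header(data):
    """Parse the first 8 bytes of an RE meta-data block."""
    flags, roc_id, offset = struct.unpack("!HHI", data[:8])
    return {
        "version": flags >> 12,
        "first": bool(flags & 0x2),
        "last": bool(flags & 0x1),
        "roc_id": roc_id,
        "offset": offset,
    }


re_header = pack_re_header(roc_id=7, offset=100, first=True)
re_fields = unpack_re_header(re_header)
```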

The resultant DAQ data stream is shown just below the block diagram in Figure X and depicts the stream UDP packet structure from the DAQ system to the LB. Individual packets are meta-data tagged both for the LB, to route based on tick to the proper compute node, and for the RE with packet offset spanning the collection of packets for a single tick for eventual destination reassembly.

The depicted sequence is only illustrative, and no assumption about the order of packets with respect to either tick or offset should be made by the LB or the RE.

UDP Header

The UDP Header will be populated as follows:

- Source Port: lower 16 bits of Load Balancer Tick (for LAG switch entropy)

- Destination Port: value that indicates the LB should perform load balancing (else the packet is discarded)
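A small Python sketch of how a sender might choose the two port values, assuming the two fields above are the UDP source and destination ports. The destination value 0x4c42 is the one the P4 parser later in this page selects on; the function name is hypothetical.

```python
LB_UDP_DST_PORT = 0x4C42  # port the P4 parser selects on (ASCII "LB")


def udp_ports_for_tick(tick):
    """Return (source_port, destination_port) for a packet carrying this tick."""
    # The low 16 bits of the tick vary from tick to tick, which gives a LAG
    # hash something to spread on, while packets of one tick share one value.
    return tick & 0xFFFF, LB_UDP_DST_PORT


ports = udp_ports_for_tick(0x123456789A)
```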


ROC Aggregation Switch

Individual ROC channels will be aggregated for maximum throughput by a switch using link aggregation (LAG) or similar, and the network traffic downstream of the switch will be addressed to the LB FPGA (see Figure X, Appendix X). If the LAG-configured switch proves incapable of meeting line rate throughput (100 Gb/s), then one or more additional FPGAs can be engineered to subsume this function, as depicted in Figure X.

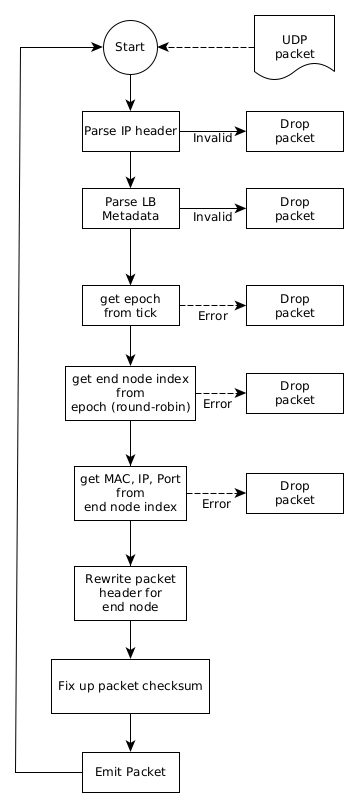

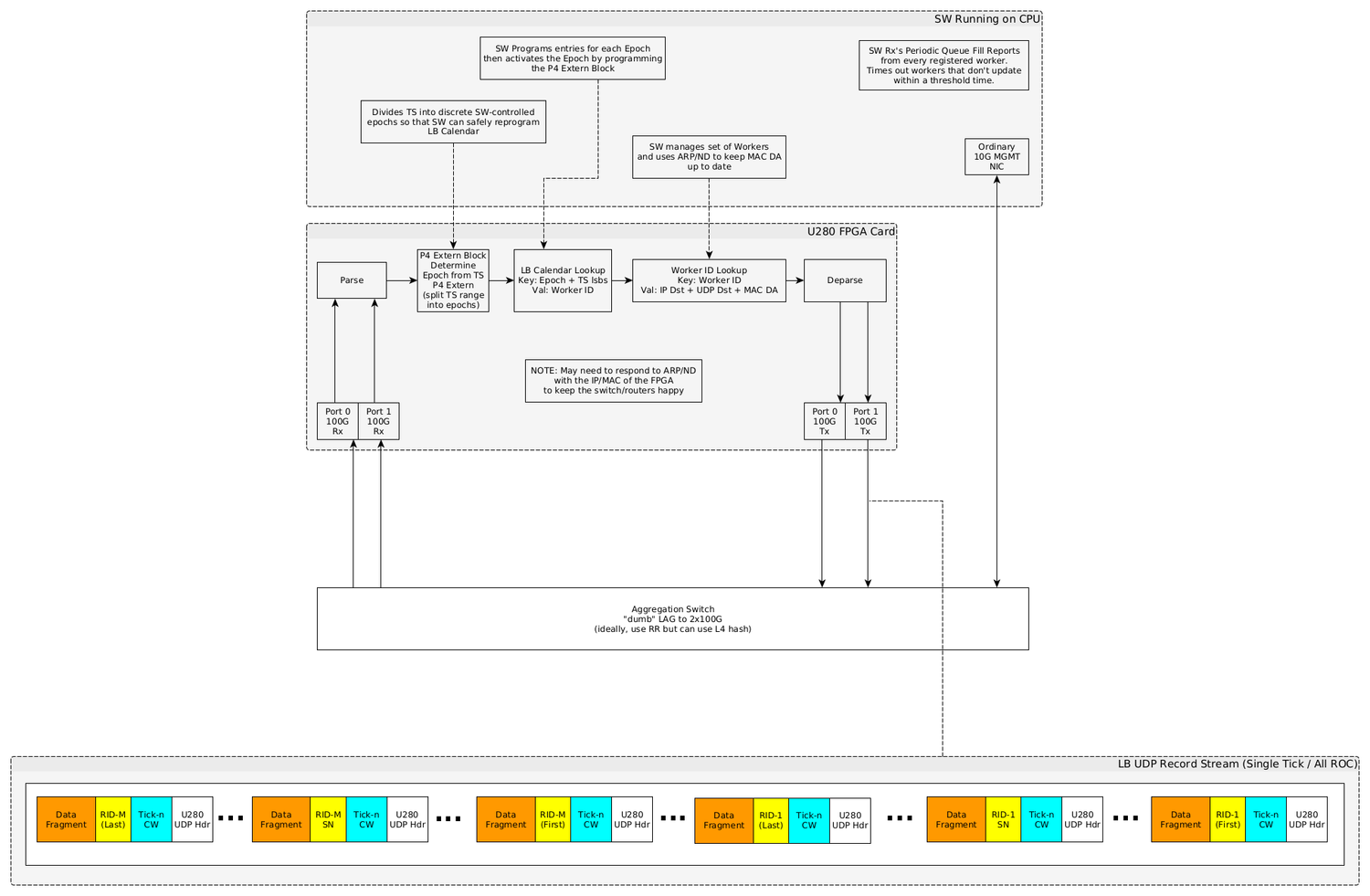

LB Processing

The FPGA resident LB aggregates data across all DAQ channels for a single discrete tick and routes this aggregated data to individual end compute nodes in cooperation with the FPGA host chassis CPU using algorithms designed for the host CPU and feedback received from the end compute node farm, maintaining complete opacity of the UDP payload to the LB (except for the LB meta-data).

Data Plane (FPGA) Processing

- Packet Parsing Stage

  - Headers defined in the previous stage will be parsed and made available for the remaining stages.

  - The Event Payload MUST NOT be parsed by the load balancer.

- Input Packet Filter Stage

  - Implemented as a P4 table with the following properties

    - Max Entries: 32

    - Key:

      - (Exact Match) EtherType

      - (Exact Match) (96b 0 ∥ IPv4 Dst) OR IPv6 Dst

      - (Binary Match) UDP Dst Port

    - Value: None

  - A miss in this table MUST result in the packet being discarded since it is not intended for the load balancer.

  - The P4 code in this stage must also check both the Magic and Version fields in the LB Header. A mismatch from the expected values MUST result in the packet being discarded.

- Calendar Epoch Assignment Stage

  - Implemented as a P4 table with the following properties

    - Max Entries: 128

    - Key: (Ternary Match) 64b LB Event Number (Timestamp)

    - Value: 32b Calendar Epoch

- Load Balance Calendar to Member Map Stage

  - Implemented as a P4 table with the following properties

    - Max Entries: 2048

    - Key:

      - (Exact Match) 32b Calendar Epoch

      - (Exact Match) 9b Calendar Slot (i.e., LB Event Number & 0x1FF)

    - Value: 16b LB Member ID

- Load Balance Member Info Lookup Stage

  - Implemented as a P4 table with the following properties

    - Max Entries: 1K

    - Key:

      - (Exact Match) 16b EtherType (IPv4 or IPv6)

      - (Exact Match) 16b LB Member ID

    - Value:

      - 8b Action ID

        - 1 = IPv4 Rewrite

        - 2 = IPv6 Rewrite

      - IPv4 Rewrite Action

        - 48b MAC DA (for next-hop router)

        - 32b IPv4 Dst

        - 16b UDP Dst Port

      - IPv6 Rewrite Action

        - 48b MAC DA (for next-hop router)

        - 128b IPv6 Dst

        - 16b UDP Dst Port

  - The Rewrite Action type must match the input packet's EtherType. For example, an input IPv4 packet cannot use the IPv6 Rewrite Action and vice versa.

  - Before applying the rewrite actions, the original packet's MAC DA should be copied into the MAC SA. This ensures that the outgoing packet is sent from exactly the MAC address that the original packet was destined to, which helps to keep the MAC FDB entries in the adjacent switches from expiring.

  - Packets will be transmitted back out the port they were received on.

The resultant LB data stream is shown just above the block diagram in Figure X and depicts the stream UDP packet structure from the LB to the RE concerning an arbitrary single destination compute node. Individual packets here are still meta-data tagged both for the LB and RE. The RE for a target compute node will see a collection of packets that share a common tick. The depicted sequence is only illustrative, and no assumption about the order of packets with respect to the offset should be made by the RE.
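The four table stages above can be modelled in a few lines of Python to check a planned table configuration before loading it. This is only a behavioural sketch: the dictionary-based tables, the first-match-wins ordering of the epoch entries, and all addresses and ports below are illustrative assumptions, not the FPGA implementation.

```python
def lb_route(pkt, filter_table, epoch_table, calendar, members):
    """Return the member rewrite for pkt, or None if any stage discards it."""
    # Input Packet Filter: exact match on EtherType, IP Dst, UDP Dst Port.
    if (pkt["ether_type"], pkt["ip_dst"], pkt["udp_dst"]) not in filter_table:
        return None
    # Calendar Epoch Assignment: first (value, mask, epoch) entry that matches wins.
    epoch = next((e for value, mask, e in epoch_table
                  if (pkt["tick"] & mask) == value), None)
    if epoch is None:
        return None
    # Calendar to Member Map: the low 9 bits of the tick select the slot.
    member_id = calendar.get((epoch, pkt["tick"] & 0x1FF))
    if member_id is None:
        return None
    # Member Info Lookup: keyed by EtherType and member ID.
    return members.get((pkt["ether_type"], member_id))


filter_table = {(0x0800, "192.0.2.1", 0x4C42)}
epoch_table = [(0x10, 0xFFFFFFFFFFFFFFF0, 1),  # ticks 0x10-0x1f -> epoch 1
               (0x0, 0x0, 0)]                  # wildcard -> epoch 0
calendar = {(0, slot): slot % 2 for slot in range(512)}
calendar.update({(1, slot): 2 for slot in range(512)})
members = {(0x0800, m): ("02:00:00:00:00:0%d" % m, "198.51.100.%d" % (10 + m), 19522)
           for m in range(3)}


def packet(tick, udp_dst=0x4C42):
    return {"ether_type": 0x0800, "ip_dst": "192.0.2.1", "udp_dst": udp_dst, "tick": tick}
```

Calling `lb_route(packet(4), filter_table, epoch_table, calendar, members)` walks one packet through all four lookups; a miss at any stage returns `None`, mirroring the discard behaviour described above.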

LB Data Plane P4 Code

1 // -*- Mode: c -*-

2 #include <core.p4>

3 #include <xsa.p4>

4

5 struct intrinsic_metadata_t {

6 bit<64> ingress_global_timestamp;

7 bit<64> egress_global_timestamp;

8 bit<16> mcast_grp;

9 bit<16> egress_rid;

10 }

11

12 header ethernet_t {

13 bit<48> dstAddr;

14 bit<48> srcAddr;

15 bit<16> etherType;

16 }

17

header ipv6_t {

bit<4> version;

bit<8> trafficClass;

bit<20> flowLabel;

bit<16> payloadLen;

bit<8> nextHdr;

bit<8> hopLimit;

bit<128> srcAddr;

bit<128> dstAddr;

}

header ipv4_t {

bit<4> version;

bit<4> ihl;

bit<8> diffserv;

bit<16> totalLen;

bit<16> identification;

bit<3> flags;

bit<13> fragOffset;

bit<8> ttl;

bit<8> protocol;

bit<16> hdrChecksum;

bit<32> srcAddr;

bit<32> dstAddr;

}

header ipv4_opt_t {

varbit<320> options; // IPv4 options - length = (ipv4.hdr_len - 5) * 32

}

header udp_t {

bit<16> srcPort;

bit<16> dstPort;

bit<16> totalLen;

bit<16> checksum;

}

header udplb_t {

bit<16> magic; /* "LB" */

bit<8> version; /* version 1 */

bit<8> proto;

bit<64> tick;

}

#define SIZEOF_UDPLB_HDR 12

struct short_metadata {

bit<64> ingress_global_timestamp;

bit<9> egress_spec;

bit<1> processed;

bit<16> packet_length;

}

struct headers {

ethernet_t ethernet;

ipv4_t ipv4;

ipv4_opt_t ipv4_opt;

ipv6_t ipv6;

udp_t udp;

udplb_t udplb;

}

// User-defined errors

error {

InvalidIPpacket,

InvalidUDPLBmagic,

InvalidUDPLBversion

}

parser ParserImpl(packet_in packet, out headers hdr, inout short_metadata short_meta, inout standard_metadata_t smeta) {

state start {

transition parse_ethernet;

}

state parse_ethernet {

packet.extract(hdr.ethernet);

transition select(hdr.ethernet.etherType) {

16w0x0800: parse_ipv4;

16w0x86dd: parse_ipv6;

default: accept;

}

}

state parse_ipv4 {

packet.extract(hdr.ipv4);

verify(hdr.ipv4.version == 4 && hdr.ipv4.ihl >= 5, error.InvalidIPpacket);

packet.extract(hdr.ipv4_opt, (((bit<32>)hdr.ipv4.ihl - 5) * 32));

transition select(hdr.ipv4.protocol) {

8w17: parse_udp;

default: accept;

}

}

state parse_ipv6 {

packet.extract(hdr.ipv6);

verify(hdr.ipv6.version == 6, error.InvalidIPpacket);

transition select(hdr.ipv6.nextHdr) {

8w17: parse_udp;

default: accept;

}

}

state parse_udp {

packet.extract(hdr.udp);

transition select(hdr.udp.dstPort) {

16w0x4c42: parse_udplb;

default: accept;

}

}

state parse_udplb {

packet.extract(hdr.udplb);

verify(hdr.udplb.magic == 0x4c42, error.InvalidUDPLBmagic);

verify(hdr.udplb.version == 1, error.InvalidUDPLBversion);

transition accept;

}

}

control MatchActionImpl(inout headers hdr, inout short_metadata short_meta, inout standard_metadata_t smeta) {

//

// DstFilter - a gate

//

bit<128> meta_ipdst = 128w0;

action drop() {

smeta.drop = 1;

}

table dst_filter_table { // layer 2/3 filter

actions = {

drop;

NoAction;

}

key = {

hdr.ethernet.dstAddr : exact; // this a unicast packet for us at layer 2 (MAC) ?

hdr.ethernet.etherType : exact; // ipv4 or ipv6

meta_ipdst : exact; // normalized 128b IP (not MAC) address from either ipv4/6

}

size = 32;

default_action = drop;

}

//

// EpochAssign

//

bit<32> meta_epoch = 0;

action do_assign_epoch(bit<32> epoch) {

meta_epoch = epoch;

}

table epoch_assign_table {

actions = {

do_assign_epoch;

drop;

}

key = {

hdr.udplb.tick : lpm; // lpm is the keystone to routing everywhere

}

size = 128;

default_action = drop;

}

//

// LoadBalanceCalendar

//

// Use lsbs of tick to select a calendar slot

bit<9> calendar_slot = (bit<9>) hdr.udplb.tick & 0x1FF; // 9 bits

bit<16> meta_member_id = 0;

action do_assign_member(bit<16> member_id) {

meta_member_id = member_id;

}

table load_balance_calendar_table {

actions = {

do_assign_member;

drop;

}

key = {

meta_epoch : exact;

calendar_slot : exact;

}

size = 2048;

default_action = drop;

}

//

// MemberInfoLookup

//

// Cumulative checksum delta due to field rewrites

bit<16> ckd = 0;

bit<16> new_udp_dst = 0;

action cksum_sub(inout bit<16> cksum, in bit<16> a) {

bit<17> sum = (bit<17>) cksum;

sum = sum + (bit<17>)(a ^ 0xFFFF);

sum = (sum & 0xFFFF) + (sum >> 16);

cksum = sum[15:0];

}

action cksum_add(inout bit<16> cksum, in bit<16> a) {

bit<17> sum = (bit<17>) cksum;

sum = sum + (bit<17>)a;

sum = (sum & 0xFFFF) + (sum >> 16);

cksum = sum[15:0];

}

action cksum_swap(inout bit<16> cksum, in bit<16> old, in bit<16> new) {

cksum_sub(cksum, old);

cksum_add(cksum, new);

}

action do_ipv4_member_rewrite(bit<48> mac_dst, bit<32> ip_dst, bit<16> udp_dst) {

// Calculate IPv4 and UDP pseudo header checksum delta using rfc1624 method

cksum_swap(ckd, hdr.ipv4.dstAddr[31:16], ip_dst[31:16]);

cksum_swap(ckd, hdr.ipv4.dstAddr[15:00], ip_dst[15:00]);

cksum_swap(ckd, hdr.ipv4.totalLen, hdr.ipv4.totalLen - SIZEOF_UDPLB_HDR);

// Apply the accumulated delta to the IPv4 header checksum

hdr.ipv4.hdrChecksum = hdr.ipv4.hdrChecksum ^ 0xFFFF;

cksum_add(hdr.ipv4.hdrChecksum, ckd);

hdr.ipv4.hdrChecksum = hdr.ipv4.hdrChecksum ^ 0xFFFF;

hdr.ethernet.dstAddr = mac_dst;

hdr.ipv4.dstAddr = ip_dst;

hdr.ipv4.totalLen = hdr.ipv4.totalLen - SIZEOF_UDPLB_HDR;

new_udp_dst = udp_dst;

}

action do_ipv6_member_rewrite(bit<48> mac_dst, bit<128> ip_dst, bit<16> udp_dst) {

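// Calculate UDP pseudo header checksum delta using rfc1624 method

// NOTE: only dstAddr[63:0] is folded into the delta below, which is sufficient only if the rewrite leaves dstAddr[127:64] unchanged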

cksum_swap(ckd, hdr.ipv6.dstAddr[63:48], ip_dst[63:48]);

cksum_swap(ckd, hdr.ipv6.dstAddr[47:32], ip_dst[47:32]);

cksum_swap(ckd, hdr.ipv6.dstAddr[31:16], ip_dst[31:16]);

cksum_swap(ckd, hdr.ipv6.dstAddr[15:00], ip_dst[15:00]);

cksum_swap(ckd, hdr.ipv6.payloadLen, hdr.ipv6.payloadLen - SIZEOF_UDPLB_HDR);

hdr.ethernet.dstAddr = mac_dst;

hdr.ipv6.dstAddr = ip_dst;

hdr.ipv6.payloadLen = hdr.ipv6.payloadLen - SIZEOF_UDPLB_HDR;

new_udp_dst = udp_dst;

}

table member_info_lookup_table {

actions = {

do_ipv4_member_rewrite;

do_ipv6_member_rewrite;

drop;

}

key = {

hdr.ethernet.etherType : exact;

meta_member_id : exact;

}

size = 1024;

default_action = drop;

}

// Entry Point

apply {

bool hit;

//

// DstFilter

//

// Normalized IP destination address for both ipv4 and ipv6

if (hdr.ipv4.isValid()) {

meta_ipdst = (bit<96>) 0 ++ (bit<32>) hdr.ipv4.dstAddr;

} else if (hdr.ipv6.isValid()) {

meta_ipdst = hdr.ipv6.dstAddr;

}

// .apply() looks up key and applies action specified by control plane on match

hit = dst_filter_table.apply().hit;

if (!hit) {

return;

}

//

// EpochAssign

//

if (!hdr.udplb.isValid()) {

return;

}

hit = epoch_assign_table.apply().hit;

if (!hit) {

return;

}

//

// LoadBalanceCalendar

//

hit = load_balance_calendar_table.apply().hit;

if (!hit) {

return;

}

//

// MemberInfoLookup

//

hit = member_info_lookup_table.apply().hit;

if (!hit) {

return;

}

//

// UpdateUDPChecksum

//

// Calculate UDP pseudo header checksum delta using rfc1624 method

cksum_swap(ckd, hdr.udp.dstPort, new_udp_dst);

cksum_swap(ckd, hdr.udp.totalLen, hdr.udp.totalLen - SIZEOF_UDPLB_HDR);

// Subtract out the bytes of the UDP load-balance header

cksum_sub(ckd, hdr.udplb.magic);

cksum_sub(ckd, hdr.udplb.version ++ hdr.udplb.proto);

cksum_sub(ckd, hdr.udplb.tick[63:48]);

cksum_sub(ckd, hdr.udplb.tick[47:32]);

cksum_sub(ckd, hdr.udplb.tick[31:16]);

cksum_sub(ckd, hdr.udplb.tick[15:00]);

// Write the updated checksum back into the packet

hdr.udp.checksum = hdr.udp.checksum ^ 0xFFFF;

cksum_add(hdr.udp.checksum, ckd);

hdr.udp.checksum = hdr.udp.checksum ^ 0xFFFF;

// Update destination port and fix up length to adapt to dropped udplb header

hdr.udp.dstPort = new_udp_dst;

hdr.udp.totalLen = hdr.udp.totalLen - SIZEOF_UDPLB_HDR;

}

}

control DeparserImpl(packet_out packet, in headers hdr, inout short_metadata short_meta, inout standard_metadata_t smeta) {

apply {

packet.emit(hdr.ethernet);

packet.emit(hdr.ipv4);

packet.emit(hdr.ipv4_opt);

packet.emit(hdr.ipv6);

packet.emit(hdr.udp);

}

}

XilinxPipeline(

ParserImpl(),

MatchActionImpl(),

DeparserImpl()

) main;

- lines 13-14 (ethernet_t dstAddr, srcAddr): These are MAC addresses

- line 45 (ipv4_opt_t options): IPv4 options length = (ipv4.ihl - 5) × 32

- line 56 (udplb_t magic): literally “LB” = 0x4c42; cf. section Load Balancer Meta-Data and line 8 of section ROC PCAP Generator Script.

- line 57 (udplb_t version): LB version; cf. line 9 of section ROC PCAP Generator Script

- line 58 (udplb_t proto): protocol; cf. line 10 of section ROC PCAP Generator Script, and section Read Out Controller Processing

- lines 150, 301 (dst_filter_table and its apply() call): Gate to ensure the incoming packet is for the LB

- line 174 (epoch_assign_table key): epoch is determined by longest prefix match of tick; cf. section X lines 18,25 for epochs 0,1 respectively

- line 185 (calendar_slot): lower 9 bits of tick used for member slot indexing on a round-robin basis; all 512 member slots require population for all active epochs; cf. line 33 of section LB Control Plane
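The cksum_sub, cksum_add and cksum_swap actions in the listing apply an incremental one's-complement checksum update (the RFC 1624 method named in the code comments). The following Python model, with hypothetical helper names and made-up header words, checks that applying an accumulated delta gives the same result as recomputing the checksum from scratch.

```python
def ones_add(a, b):
    """16-bit one's-complement addition with end-around carry."""
    s = a + b
    return (s & 0xFFFF) + (s >> 16)


def cksum_swap(delta, old, new):
    """Fold 'remove old, add new' into a running delta, as the P4 cksum_swap does."""
    delta = ones_add(delta, old ^ 0xFFFF)  # subtracting is adding the complement
    return ones_add(delta, new)


def internet_checksum(words):
    """Full checksum: complement of the one's-complement sum of all words."""
    total = 0
    for word in words:
        total = ones_add(total, word)
    return total ^ 0xFFFF


def apply_delta(cksum, delta):
    """Un-complement the stored checksum, add the delta, complement again."""
    return ones_add(cksum ^ 0xFFFF, delta) ^ 0xFFFF


old_words = [0x4500, 0x0054, 0xC0A8, 0x0001]  # illustrative values only
new_words = [0x4500, 0x0054, 0x0A00, 0x0001]  # third word rewritten
delta = cksum_swap(0, old_words[2], new_words[2])
updated = apply_delta(internet_checksum(old_words), delta)
```

The point of the delta form is that the pipeline never needs to touch the payload: only the rewritten fields and the removed LB header words enter the calculation.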

LB Control Plane

This is the Vivado P4 simulator control plane configuration script:

- lines 1,8: Sets the LB MAC address, IP address for IPV4, IPV6 respectively.

- line 15: Set up Epoch 0 to match all ticks at low priority (=64)

- line 22: Set up Epoch 1 to match ticks for which the 2nd least significant nibble = 1 and the least significant nibble is arbitrary, designating 16 possible tick values for Epoch 1, at higher priority (=5).

- line 29: Designate member (end-node) 0 for calendar slot 0xa, Epoch 0

- line 36: Designate member (end-node) 0 for calendar slot 0x14, Epoch 1

- line 43: Designate member 0 for IPV4 packets as MAC=0x11223344556, IP=0xaabbccdd, Port=0x4556

- line 52: Designate member 0 for IPV6 packets as MAC=0x11223344556,IP=0xfe8000000000000000000000000000, Port=0x4556

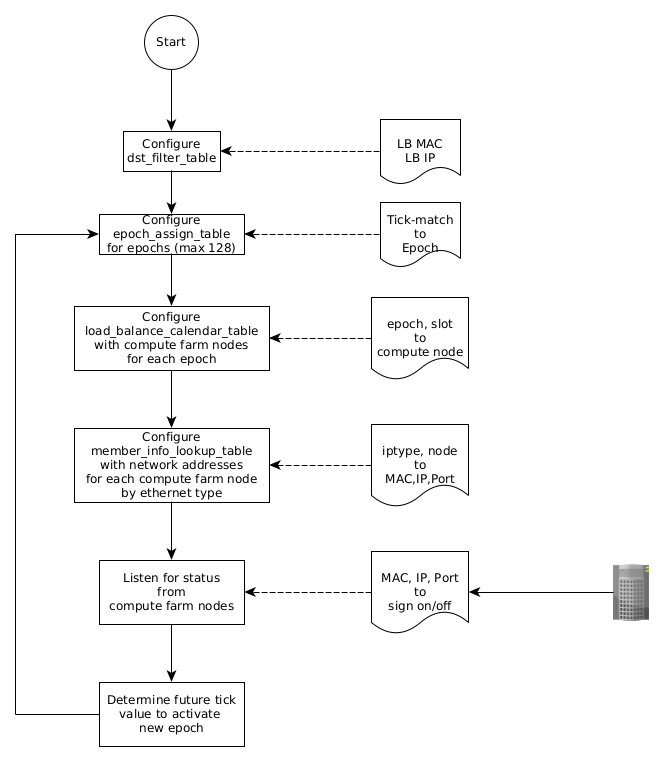

Control Plane (Host CPU) Processing

- Software Initialization Steps

  - PROPOSAL: A low-level C software library will be provided to allow insertion/deletion of table entries into each of the P4 tables. All other SW will likely need to be written by the user of the LB pipeline.

  - Populate Input Packet Filter Table

    - Table Insert ⟨0x800, LB IPv4 Addr, LB UDP Dst⟩

    - Table Insert ⟨0x86dd, LB IPv6 Addr, LB UDP Dst⟩

  - Populate Load Balance Member Table: For each LB Member

    - Allocate next free Member ID number from SW pool

    - Table Insert

      - K: ⟨0x800, Member ID⟩

      - V: ⟨IPv4 Rewrite, 0, Next-Hop MAC DA, Worker IPv4 Dst, Worker UDP Dst Port⟩

    - Table Insert

      - K: ⟨0x86dd, Member ID⟩

      - V: ⟨IPv6 Rewrite, 0, Next-Hop MAC DA, Worker IPv6 Dst, Worker UDP Dst Port⟩

  - Populate Load Balance to Member Map Table

    - Allocate next free Calendar Epoch number from SW pool

    - Assign all active LB Member IDs to the 512 Calendar Slots

      - Any member can occur from 0 to 512 times in the calendar

      - A member occurring more times in the calendar has a higher “weight” and will be more likely to be assigned an event within this Calendar Epoch

      - All 512 slots MUST have a member assigned to them, or events that target the empty slot will be entirely discarded by the load balancer

    - For each Calendar Slot Table Insert

      - K: ⟨Calendar Epoch, Calendar Slot⟩

      - V: ⟨LB Member ID⟩

  - Populate the Calendar Epoch Assignment Table

    - Assign all Event IDs (Timestamps) to the newly allocated Calendar Epoch

    - Table Insert

      - K: ⟨*⟩

      - V: ⟨Calendar Epoch⟩

  - The load balancer will now assign each Rx’d packet to exactly one of the LB members based on the Event ID contained in the packet. The mapping will remain consistent for any given Event ID within an Epoch since the Calendar and Member tables cannot change within a given Epoch.

- Making Changes to the Load Balancer Configuration

  This section assumes that the load balancer is in service, so care must be taken to avoid service disruption during reconfiguration. If the load balancer is out of service, you can reconfigure it using the Initialization steps above without care for disruption.

  Any Epoch that is reachable (connected) via the Calendar Epoch Assignment table MUST NOT be changed. In-service reconfiguration of the load balancer is done by the following steps.

  - Allocate the next free Calendar Epoch ID. Once all the remaining updates are done, this new Epoch will be activated.

  - Insert new entries into the Load Balance Member Table for any entries that need to be different in the next Epoch

  - Compute and insert an entirely new calendar into the Load Balance to Member Map Table using the next Calendar Epoch ID

  - Choose an Event ID in the (near) future which will become the boundary between the current Epoch and the new Epoch.

  - Compute a set of ternary prefix matches over the Event ID space which describe the entire range of Event IDs from the start of the current Epoch up to the start of the new Epoch.

  - Program the ternary prefix matches into the Calendar Epoch Assignment Table

  - Update the wildcard match in the Calendar Epoch Assignment Table to point to the new Epoch

  - The new Epoch is activated and MUST NOT be changed

  - After waiting an appropriate time for all events from the previous Epoch to have quiesced, perform the following cleanup steps.

    - Delete the ternary prefix matches for the previous Epoch from the Calendar Epoch Assignment Table. This disconnects all references for the previous Epoch to the rest of the pipeline tables.

    - Delete the Calendar for the previous Epoch

    - Delete the Member rewrites for the previous Calendar
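Two of the computations in the steps above lend themselves to a short Python sketch: filling all 512 calendar slots according to member weights, and covering a range of Event IDs with prefix matches. Both function names are hypothetical, and the smooth weighted round-robin used to interleave members is one possible choice rather than anything prescribed by this design.

```python
def build_calendar(weights, slots=512):
    """Fill every slot with a member ID, in proportion to positive integer weights."""
    total = sum(weights.values())
    current = {member: 0 for member in weights}
    calendar = []
    for _ in range(slots):
        # Smooth weighted round-robin: heavier members are picked more often,
        # but picks are interleaved rather than grouped in long runs.
        for member, weight in weights.items():
            current[member] += weight
        chosen = max(current, key=current.get)
        current[chosen] -= total
        calendar.append(chosen)
    return calendar


def range_to_prefixes(start, end, width=64):
    """Cover the half-open Event ID range [start, end) with (value, prefix_len) pairs."""
    prefixes = []
    while start < end:
        # Largest power-of-two block that is aligned at 'start' ...
        size = (start & -start) if start else (1 << width)
        # ... shrunk until it no longer runs past 'end'.
        while size > end - start:
            size >>= 1
        prefixes.append((start, width - (size.bit_length() - 1)))
        start += size
    return prefixes


calendar = build_calendar({0: 3, 1: 1})
old_epoch_prefixes = range_to_prefixes(0, 1000)
```

Each `(value, prefix_len)` pair corresponds to one entry in the Calendar Epoch Assignment Table pointing at the outgoing Epoch, after which the wildcard entry can be moved to the new Epoch. Since that table holds at most 128 entries, choosing a well-aligned boundary Event ID keeps the number of prefixes small.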

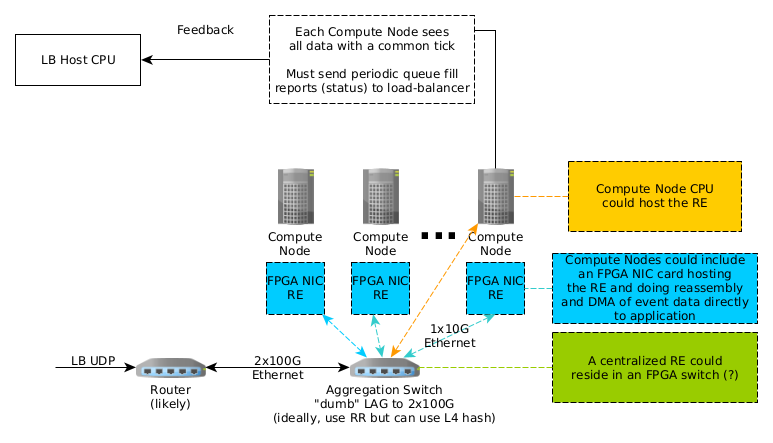

Reassembly Engine Processing

Time-stamp aggregated data transferred through network equipment will be fragmented and will require reassembly by the RE on behalf of the targeted compute farm destination node. Several candidate designs are being considered, as depicted in Figure X:

- RE resident in each compute node, in an FPGA-accelerated Network Interface Card (NIC) (e.g., Xilinx SN1000)

- RE resident in each compute node, in the CPU operating system.

- RE centralized in an FPGA residing in a compute farm switch.

Additionally, end compute nodes are individually responsible for informing the LB host CPU of their status, so that the LB host CPU can make informed decisions in composing an effective load balancing strategy for the FPGA-resident LB.
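Whichever placement is chosen, the core reassembly logic is the same. A minimal software sketch follows, assuming the RE meta-data carries byte offsets (the optional interpretation given earlier), that segments do not overlap, and that the last-packet flag marks the segment with the highest offset. The class and method names are hypothetical.

```python
from collections import defaultdict


class Reassembler:
    """Collect out-of-order segments per (tick, roc_id) until the data is contiguous."""

    def __init__(self):
        self.segments = defaultdict(dict)  # (tick, roc_id) -> {offset: payload}
        self.total = {}                    # (tick, roc_id) -> total length in bytes

    def add(self, tick, roc_id, offset, payload, last=False):
        """Store one segment; return the full buffer once complete, else None."""
        key = (tick, roc_id)
        self.segments[key][offset] = payload
        if last:
            self.total[key] = offset + len(payload)
        if key not in self.total:
            return None  # length unknown until the last segment is seen
        data = bytearray()
        for off in sorted(self.segments[key]):
            if off != len(data):
                return None  # a gap remains
            data += self.segments[key][off]
        if len(data) != self.total[key]:
            return None
        del self.segments[key], self.total[key]
        return bytes(data)


re_engine = Reassembler()
partial = re_engine.add(tick=9, roc_id=1, offset=4, payload=b"efgh", last=True)
complete = re_engine.add(tick=9, roc_id=1, offset=0, payload=b"abcd")
```

Keying on both tick and ROC Id keeps transfers from different channels apart; it also makes no assumption about arrival order, in line with the earlier note that the RE must not rely on packet ordering.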

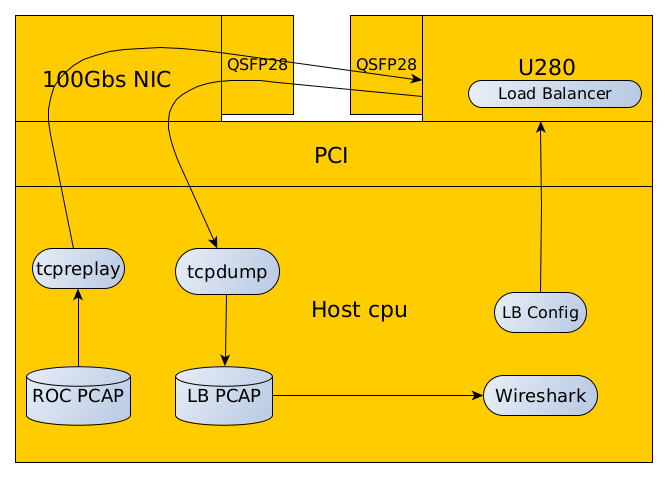

Initial Test Configuration

Figure X depicts a notional EJFAT initial test configuration using commonly available Unix utilities and not initially using a network switch. The sequence of this test configuration is as follows:

- Generate a representative ROC PCAP file using the script in section ROC PCAP Generator Script. This data should emulate a data stream from the DAQ system, with sequences in the stream chosen to test the functionality of the LB.

- Configure the P4 load balancer per the guidelines set forth in section LB Control Plane.

- Use tcpreplay to send the ROC PCAP file via the test host CPU NIC into the FPGA-resident LB via the FPGA’s (bidirectional) QSFP28 optical port.

- Use tcpdump to capture the LB response sent back into the test host CPU NIC into an LB PCAP file.

- Use Wireshark/tshark or another tool to decode, render, and examine the LB PCAP file to ascertain whether the LB provided the correct response. Decoding should be facilitated by using the Protocol field in the LB meta-data specified in section Load Balancer Meta-Data, with add-ins for the bit structures defined in sections Load Balancer Meta-Data and Reassembly Engine Meta-Data.

- Use an LB config process running on the host CPU to set up test conditions with the destination compute farm that alter the LB’s routing strategy, using the information in sections Control Plane (Host CPU) Processing and LB Control Plane.

ROC PCAP Generator Script

The following Scapy test script can be used to generate the ROC PCAP file shown in Figure X:

Scapy Installation

Scapy may be installed by executing the following command at the Unix command line:

pip3 install --user scapy

ROC PCAP Meta Data File

In the following file, each row (1...n) matches the corresponding packet (1...n) in the packets_in.pcap file (lines 42, 46 in section ROC PCAP Generator Script). The simulator loads a line from this file and uses it to initialize the short_metadata struct, which is processed along with the corresponding packet data. You should find references to the short_metadata struct in the P4 program. I have no idea what the sim does when it has more packets in the .pcap file than there are lines in the .meta file. In general, all of these fields may be relevant. For the load balancer specifically, the only field that really matters is egress_spec. On the way in, it contains the *ingress* port. It isn’t touched by the P4 program, since we always want the output packet to go back out the exact same interface that it arrived on (after we rewrite the destination header fields, of course). The p4bm simulator does something like this:

open packets_in.pcap for read

open packets_in.meta for read

open packets_out.pcap for write

open packets_out.meta for write

while (!done) {

read packet p from packets_in.pcap

read metadata row m from packets_in.meta

(out_packet, out_meta) = simulate_the_p4(p, m)

write out_packet to packets_out.pcap

write out_meta to packets_out.meta

}

The pcap-generator.py script isn’t directly used by the p4bm sim. That’s just something I wrote to help you make up input packets for the simulator, so you didn’t have to make your input packets (as seen in packets_in.pcap) by hand. There is a 1:1 correspondence between calls to write_packet() in the pcap-generator.py script and packets in the packets_in.pcap file.

The .py program spits out 2 interleaved ROC transfers (one transfer is carried in IPv4, the other in IPv6). Each ROC transfer starts out as 1050 bytes of EVIO6 data. It is then segmented into 100 byte segments, ready to be put into a packet. Each segment gets an EVIO6 Segmentation header added to keep track of which segment it is, then each segment gets a UDP Load Balancer header added to give the load balancer its context. Each call to write_packet() spits out one of the segments in either an IPv4 or IPv6 encapsulation. The program might be clearer if you were to duplicate the loop (one loop for IPv4 and one loop for IPv6) and put a single call to write_packet() in each of the loops. That would make it more obvious that there are 2 transfers, since they would no longer be interleaved.

The 2 lines in packets_in.meta will be used for the first 2 packets in the packets_in.pcap file. As currently written, one of those lines will be for the first segment in IPv4 and the second line will be for the first segment in IPv6. As I mentioned before, I’m actually not certain what happens after that in the simulator. In practice, it shouldn’t be important for this pipeline, since the metadata doesn’t get modified by the P4 program at all.
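The segmentation described above can be sketched without Scapy as follows. This is not the pcap-generator.py script: it uses the RE meta-data layout from earlier on this page as a stand-in for the EVIO6 Segmentation header, assumes network byte order, uses placeholder version and protocol values of 1, and produces only UDP payloads rather than full IPv4 or IPv6 packets.

```python
import struct


def segment_transfer(data, tick, roc_id, seg_size=100):
    """Split one ROC transfer into UDP payloads: LB header + RE header + segment."""
    payloads = []
    for offset in range(0, len(data), seg_size):
        chunk = data[offset:offset + seg_size]
        first = offset == 0
        last = offset + seg_size >= len(data)
        # RE word 0: version 1 in bits 0-3, first flag in bit 14, last flag in bit 15.
        flags = (1 << 12) | (int(first) << 1) | int(last)
        re_header = struct.pack("!HHI", flags, roc_id, offset)
        # The LB header is added last, so it sits outermost in the payload.
        lb_header = struct.pack("!2sBBQ", b"LB", 1, 1, tick)
        payloads.append(lb_header + re_header + chunk)
    return payloads


payloads = segment_transfer(bytes(1050), tick=1, roc_id=2)
```

With 1050 bytes of input and 100 byte segments this yields eleven payloads, the last one carrying the remaining 50 bytes.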

Tshark Plug-in for LB Meta-Data

In the following file:

Tshark Plug-in for Payload Seg

In the following file:

Appendix: EJFAT Processing