Run Group B

Shift Schedule | Shift Checklist | Hot Checkout | Beam Time Accounting

Manuals

Procedures

JLab Logbooks

RC schedule

- Note, all non-JLab numbers must be dialed with an area code. When calling from a counting-house landline, dial "9" first.

- To call JLab phones from outside the lab, all 4-digit numbers must be preceded by 757-269

- Click Here to edit Phone Numbers. Note, you then also have to edit the current page to force a refresh.


CLAS12 Run Group B, spring 2019

Beam energy 10.6 GeV/10.2 GeV (5 pass)

Important: Document all your work in the logbook!

Remember to fill in the run list at the beginning and end of each run (clas12run@gmail.com can fill the run list)

RC: Stepan Stepanyan

- (757) 575-7540

- 9 575 7540 from Counting Room

- stepanya@jlab.org

PDL: Maurizio Ungaro

- (757) 876-1789

- 9 876-1789 from Counting Room

- ungaro@jlab.org

Run Plan: December 2-3

Press the button when you have stable production beam current

DAQ config: PROD66

Trigger file: rgb_v10.trg

References and Standards (last update 3/13/2019, 12:00 PM):

Restoration of Beam to Hall B Nominal Beam Positions

FSD Thresholds

Reference Harp Scans for Beam on Tagger Dump: 2C21 [1], 2C24 [2]

Reference Harp Scans for Beam on Faraday Cup: 2C21 [3], 2C24 [4], 2H01 [5]

Reference Monitoring Histograms (20 nA, production trigger) [6]

Reference Monitoring Histograms (35 nA, production trigger) [7]

Reference Scalers and Halo-Counter Rates:

SVT acceptable currents: <10 nA (typically < 1 nA) with no beam and no HV; ~< 400 nA (HV ON, no beam); 10 nA [20], 20 nA [21], 30 nA [22], 40 nA [23], 50 nA [24], 60 nA [25], 75 nA [26]


General Instructions:

Every Shift:

Every Run:


- Note 1: Be very mindful of the background rates in the halo counters, rates in the detectors, and currents in the SVT for all settings to ensure that they are at safe levels.

At 50 nA, the integrated rates on the upstream counters have to be in the range 0-15 Hz (rates up to 100 Hz are acceptable) and the rates on the midstream counters have to be in the range 10-20 Hz (acceptable up to 50 Hz). Counting rates in the range of hundreds of Hz may indicate a bad beam tune or bleed-through from other Halls.
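The rate limits in Note 1 can be written as a small lookup, sketched here in Python. The function name, the category labels, and the handling of rates below the nominal range are assumptions made for illustration; only the numeric limits at 50 nA come from the note.

```python
# Limits from Note 1 at 50 nA, in Hz:
# (nominal minimum, nominal maximum, highest acceptable rate)
HALO_LIMITS_HZ = {
    "upstream": (0.0, 15.0, 100.0),
    "midstream": (10.0, 20.0, 50.0),
}


def classify_halo_rate(counter: str, rate_hz: float) -> str:
    """Classify an integrated halo-counter rate (hypothetical helper)."""
    if rate_hz < 0:
        raise ValueError("rate must be non-negative")
    nominal_min, nominal_max, acceptable_max = HALO_LIMITS_HZ[counter]
    if rate_hz < nominal_min:
        # Assumption: the note does not say how to treat rates below range.
        return "below nominal"
    if rate_hz <= nominal_max:
        return "nominal"
    if rate_hz <= acceptable_max:
        return "acceptable"
    # Above the acceptable limit: possible bad tune or bleed-through.
    return "high"


if __name__ == "__main__":
    for counter, rate in [("upstream", 12.0), ("midstream", 40.0), ("upstream", 300.0)]:
        print(counter, rate, classify_halo_rate(counter, rate))
```

A "high" result is only a prompt to look at the beam tune and the other Halls; the thresholds apply at 50 nA and are not scaled to other currents here.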

- Note 2: At the end of each run, follow the standard DAQ restart sequence:

"end run", "cancel", "reset", then if the run ended correctly, "download", "prestart", "go". If the run did not end correctly or if any ROCs had to be rebooted, "configure", "download", "prestart", "go".

After each step, make sure it is complete in the Run Control message window. If a ROC has crashed, find which one it is and issue a roc_reboot command and try again. Contact the DAQ expert if there are any questions.
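The branching in the restart sequence can be summarized as a short Python sketch. The function and argument names are hypothetical, and the sketch assumes that "end run", "cancel", "reset" are issued in both branches; it only lists the Run Control buttons in order and does not talk to the DAQ.

```python
from typing import List


def daq_restart_sequence(run_ended_correctly: bool,
                         rocs_rebooted: bool = False) -> List[str]:
    """Return the Run Control steps, in order, for restarting the DAQ."""
    # Assumption: these three steps are common to both cases.
    steps = ["end run", "cancel", "reset"]
    if run_ended_correctly and not rocs_rebooted:
        steps += ["download", "prestart", "go"]
    else:
        # Run did not end correctly, or a ROC had to be rebooted.
        steps += ["configure", "download", "prestart", "go"]
    return steps


if __name__ == "__main__":
    print(daq_restart_sequence(run_ended_correctly=True))
    print(daq_restart_sequence(run_ended_correctly=False))
```

Each step still has to be confirmed as complete in the Run Control message window before the next one is issued.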

- Note 3: FTOF HVs: The goal is to minimize the number of power cycles of the dividers.

- should be turned off during the initial beam tuning down to the Faraday Cup after CLAS12 has been off for a long shutdown, when doing a Moller run, when doing harp scans, or if there is tuned/pulsed beam in the upstream beamline.

- should be left on after an initial beam tune has been established and if there are only minor steering adjustments and “tweaks” being made.

- if shift workers have doubts about what to do with the HVs, they can always contact the TOF on-call expert for advice.

- Note 4: In case of a Torus and/or Solenoid Fast Dump do the following:

- Notify MCC to request beam OFF and to drop Hall B status to Power Permit

- Call Engineering on-call

- Make separate log entry with copies to HBTORUS and HBSOLENOID logbooks. In the "Notify" field add Ruben Fair, Probir Goshal, Dave Kashy and esr-users@jlab.org

- Notify Run Coordinator

- Turn off all detectors

- Note 5: When beam is being delivered to the Faraday Cup:

- the Fast Shut Down elements: Upstream, Midstream, Downstream, BOM, and Solenoid should always be in the state UNMASKED

- No changes to the FSD threshold should be made without RC or beamline expert approval

- Note 6: Any request for a special run or change of configuration must be approved by the RC and documented.

- Note 7: Carefully check the BTA every hour and run the script btaGet.py, which prints what HAS TO BE in the BTA for that hour. Edit the BTA if it is incorrect.

- Note 8: Reset CLAS12MON frequently to avoid histogram saturation. If CLAS12MON is restarted, change the MAX scale to 10 (default is 5) for the DC normalized occupancy histograms to prevent them from saturating. See log entry [28].

- Note 9: Shift workers must check the occupancies! Use this tool to compare to previous runs: [29]

- Note 10: When you have to turn the whole MVT off, do not press "All HV OFF". Instead, go to "Restore settings" from the MVT overview screen and choose the appropriate file:

- SafeMode.snp for beam tuning and Moeller runs

- MV_HV_FullField.snp for full solenoid field

- MV_HV_MidField.snp when solenoid < 4 T
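The choice among the three snapshot files can be sketched in Python as below. The function and argument names are hypothetical. Two assumptions are made: any field magnitude of 4 T or more is treated as "full field", and the sign of the field is ignored; the note itself only names "full solenoid field" and "solenoid < 4 T".

```python
def select_mvt_snapshot(beam_tuning_or_moller: bool,
                        solenoid_field_tesla: float) -> str:
    """Pick the MVT 'Restore settings' file named in Note 10."""
    if beam_tuning_or_moller:
        # Beam tuning and Moller runs always use the safe settings.
        return "SafeMode.snp"
    # Assumption: compare the field magnitude, whatever the polarity.
    if abs(solenoid_field_tesla) < 4.0:
        return "MV_HV_MidField.snp"
    # Assumption: 4 T and above counts as full field.
    return "MV_HV_FullField.snp"


if __name__ == "__main__":
    print(select_mvt_snapshot(True, 5.0))
    print(select_mvt_snapshot(False, 3.0))
    print(select_mvt_snapshot(False, 5.0))
```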

- Note 11: Check the vacuum periodically and make sure it is not higher than 5e-5.

- Note 12: Always reset the CFD thresholds after any power off/on of the CND CAMAC crate.

After the CAMAC crate (camac1) holding the CND CFD boards is switched off for any reason, it is mandatory to reset the associated thresholds by typing the following command from any clon machine terminal:

$CODA/src/rol/Linux_x86_64/bin/cnd_cfd_thresh -w 0

If this command fails and the crate is not responding, reboot the crate as follows:

roc_reboot camac1
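The reset-then-reboot fallback can be sketched as a small Python wrapper. The two commands are the ones quoted above; everything else is an assumption for illustration: the function names, treating a non-zero exit status as "failing", and the injectable `run` argument (which defaults to `subprocess.call` and lets the logic be exercised without touching the crate). The sketch cannot tell whether the crate is responding, and it does not rerun the reset after a reboot.

```python
import subprocess
from typing import Callable, List

REBOOT_COMMAND = ["roc_reboot", "camac1"]


def cnd_cfd_reset_command(coda_root: str) -> List[str]:
    """Build the threshold reset command; coda_root is the value of $CODA."""
    return [f"{coda_root}/src/rol/Linux_x86_64/bin/cnd_cfd_thresh", "-w", "0"]


def reset_cnd_cfd_thresholds(
    coda_root: str,
    run: Callable[[List[str]], int] = subprocess.call,
) -> List[List[str]]:
    """Run the reset; on failure, reboot camac1. Returns the commands issued."""
    issued = []
    reset = cnd_cfd_reset_command(coda_root)
    issued.append(reset)
    # Assumption: a non-zero exit status means the reset failed.
    if run(reset) != 0:
        issued.append(REBOOT_COMMAND)
        run(REBOOT_COMMAND)
    return issued


if __name__ == "__main__":
    # Dry run with a stand-in runner that pretends the reset fails.
    def pretend_failure(cmd: List[str]) -> int:
        print("would run:", " ".join(cmd))
        return 1 if cmd[0].endswith("cnd_cfd_thresh") else 0

    reset_cnd_cfd_thresholds("/path/to/coda", run=pretend_failure)
```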

- Note 13: Shift workers should routinely check the scalers to verify that they update correctly, and make a logbook entry if anomalies are observed after starting a new run.

- Note 14: To solve common SVT issues, see https://logbooks.jlab.org/entry/3615766

- Note 15:

For RICH recovery procedures, see log entry https://logbooks.jlab.org/entry/3562273. This applies in two cases: (1) a DAQ crash in which rich4 is not responding, or (2) RICH alarms (LV, missing tile, temperature, etc.). If the procedure does not work or you are uncertain about what to do, contact the RICH expert on call. Note that missing tiles typically occur due to lost communication. Keep in mind that the recovery procedure will kill the DAQ. If the DAQ is running for a purpose other than data taking (for which the RICH acceptance would be important), do not initiate the recovery procedure.

- The most critical parameter for RICH is the temperature of the photosensors. If the temperature rises above limits, an interlock will automatically turn off the RICH HV and LV. If this happens, notify the expert on call and keep taking data without RICH.

- Note 16: Asymmetry on FTCal ADC scalers: if the beam current is 5-10 nA and the observed asymmetry is out of spec, i.e. outside ±2.5%, check (1) that the beam position is within specs, and (2) the response of the rest of CLAS (compare the scalers of FTOF, CTOF, ECAL, etc. from the Scalers GUI to the reference for that current, as posted above). If the positions and the other detectors do not show any anomalies, keep running. The ADC scalers require at least ~15 nA for a reliable response.
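The decision described in Note 16 can be sketched in Python as follows. The function name, argument names, and returned labels are hypothetical. The note does not say what to do when an anomaly is found or when the beam current is outside 5-10 nA, so the sketch only flags those cases.

```python
ASYMMETRY_LIMIT_PERCENT = 2.5   # from Note 16
LOW_CURRENT_RANGE_NA = (5.0, 10.0)  # from Note 16


def ftcal_asymmetry_action(beam_current_na: float,
                           asymmetry_percent: float,
                           beam_position_ok: bool,
                           other_detectors_ok: bool) -> str:
    """Suggest an action for an FTCal ADC scaler asymmetry reading."""
    if abs(asymmetry_percent) <= ASYMMETRY_LIMIT_PERCENT:
        return "in spec"
    low, high = LOW_CURRENT_RANGE_NA
    if low <= beam_current_na <= high:
        if beam_position_ok and other_detectors_ok:
            # ADC scalers are not reliable below ~15 nA.
            return "keep running"
        # Assumption: the note gives no follow-up action for this case.
        return "anomaly found"
    # Assumption: other currents are outside what the note covers.
    return "not covered by Note 16"


if __name__ == "__main__":
    print(ftcal_asymmetry_action(7.0, 4.0, True, True))
    print(ftcal_asymmetry_action(7.0, 4.0, False, True))
```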

Webcams

Manuals

Epics on the web

Runs and Cooking Info

December 2019 - January 2020 Run

Winter 2019 Run

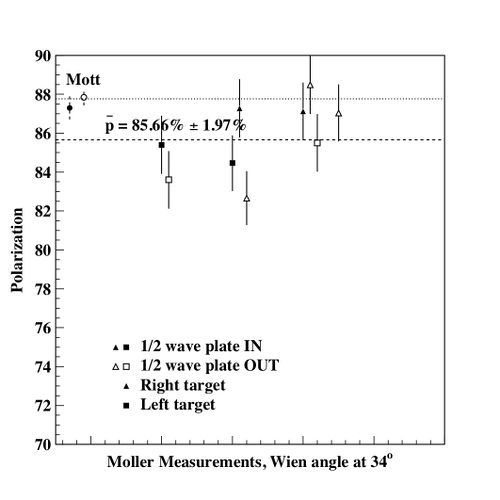

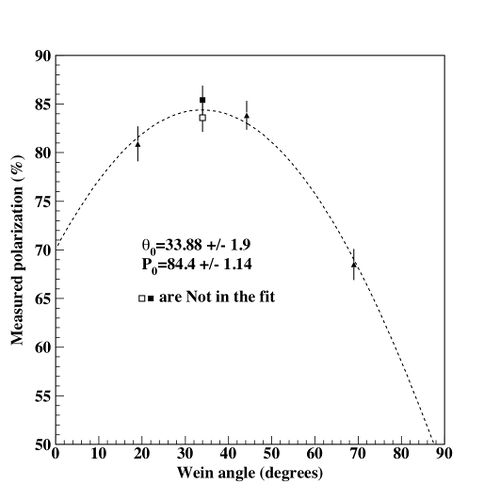

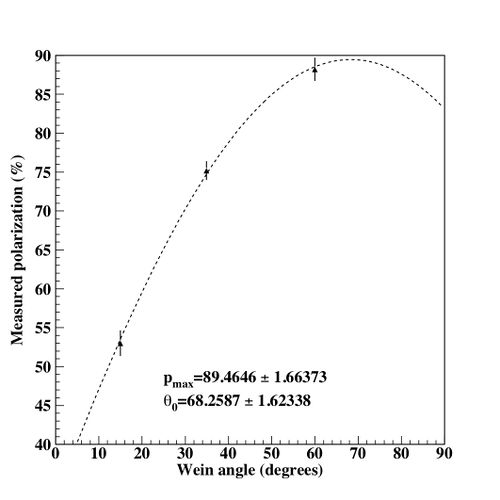

mini-Spin dance


Hall-B

Accelerator

Bluejeans meetings